Deploying a Secure Mini MapR Cluster with Docker on a Single AWS Instance

Introduction

If you want to try out the MapR Converged Data Platform and its big data capabilities but don’t have a hardware cluster immediately available, you still have a few options. For example, you can spin up a MapR cluster in the cloud using multiple node instances on one of our IaaS partners (Amazon, Azure, etc.). The downside is that with multiple node instances, the costs can add up to more than you want to spend on an experimental cluster. You can also experiment with the MapR Sandbox. Its limitation is that it doesn’t give you a true multi-node cluster, so you can’t fully explore features such as multi-tenancy, topologies, and service layouts.

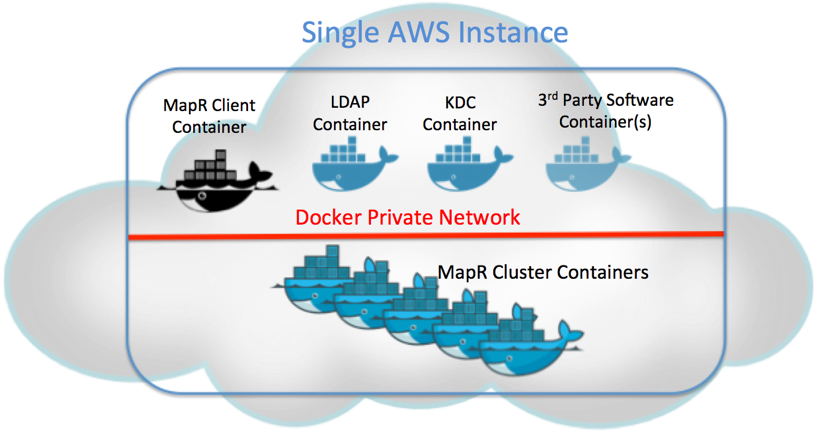

As another option, you can use Docker together with an AWS CloudFormation template to spin up a multi-node MapR cluster in a single virtual instance. You can set up a non-secure, secure, or Kerberos-enabled (“Kerberized”) cluster, so you can explore the full feature set that the MapR Platform offers. An LDAP service container provides centralized directory lookup across the cluster, while a KDC service container issues Kerberos tickets for cluster authentication in a Kerberized cluster. Additionally, a MapR client container is used to better simulate a production environment. Customers can also install their software in separate containers alongside the cluster in the same instance. This is a very cost-effective way of spinning up a true multi-node MapR cluster in the cloud.

Since containers are highly disposable, it is really easy to reinstall the cluster if you want to try out different things, like a PoC, a demo, or a training environment. The image below shows what the deployment might look like:

To get you started, the rest of this blog post will walk you through how you can get a mini MapR cluster up and running on AWS in less than 30 minutes.

There are four main steps:

- Spin up an AWS instance using the CloudFormation template.

- Log in to the instance and execute the MapR deployment script.

- Apply the trial license (optional).

- Start exploring the cluster.

Spin up an AWS Instance Using the CloudFormation Template

- Log in to the AWS console. If you don’t have an AWS account, you will need to create one first.

- Switch to one of these regions: US West (Oregon), US East (Virginia), Asia Pacific (Tokyo), Asia Pacific (Sydney), EU (Ireland) or EU (Frankfurt).

- Download the CloudFormation template (CFT) from: https://raw.githubusercontent.com/jsunmapr/AWS-CFTs/master/520/MapR520-community-Docker.template

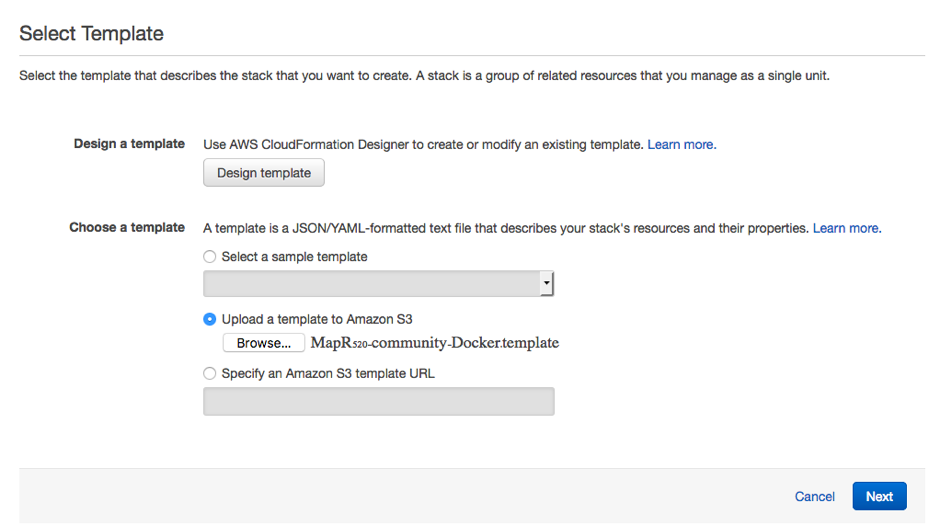

- Select “CloudFormation Template” on your AWS portal. Then select “Create Stack” -> “Upload a template to AWS S3” -> “Browse,” choose the template you downloaded in the previous step, and upload it.

- Follow the instructions to launch a MapR cluster.

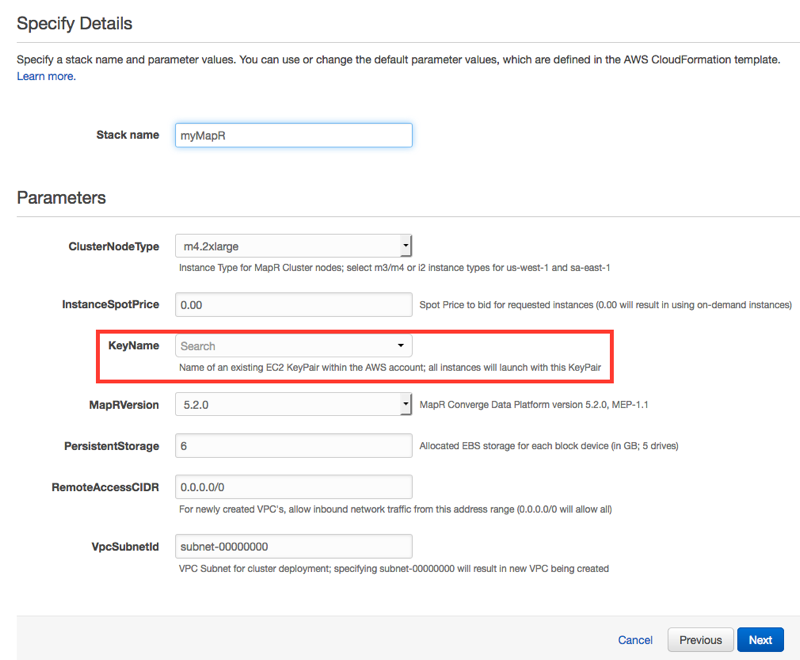

At a minimum, fill in the stack name and select your key.

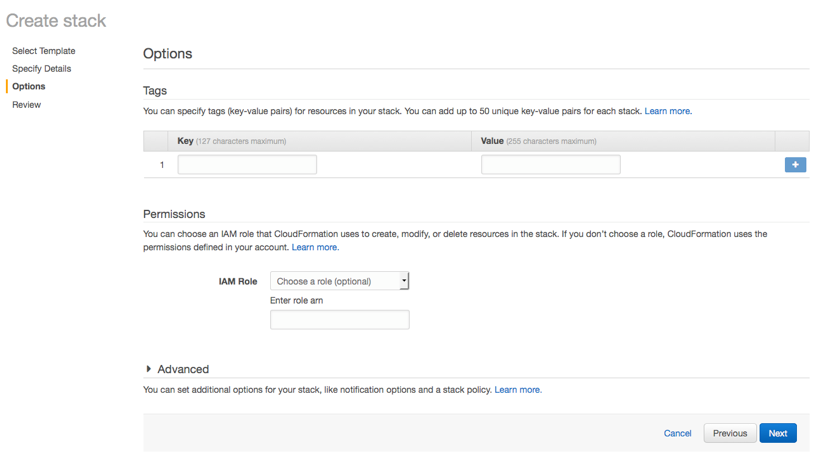

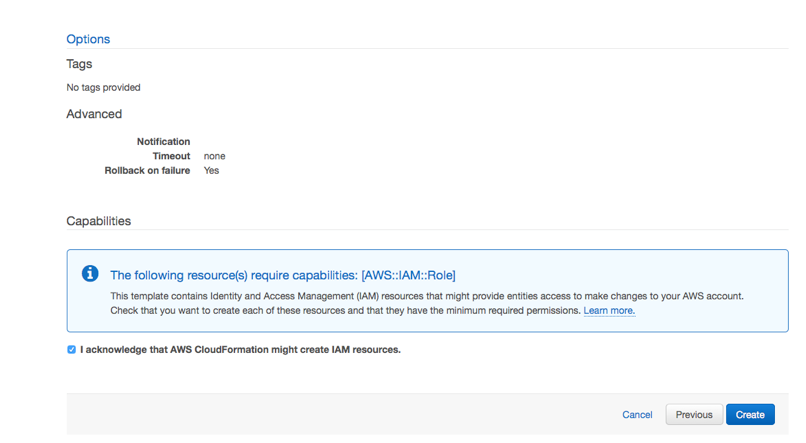

The items on the next page are all optional. Leave them blank.

Check the agreement box, and hit “Create.”

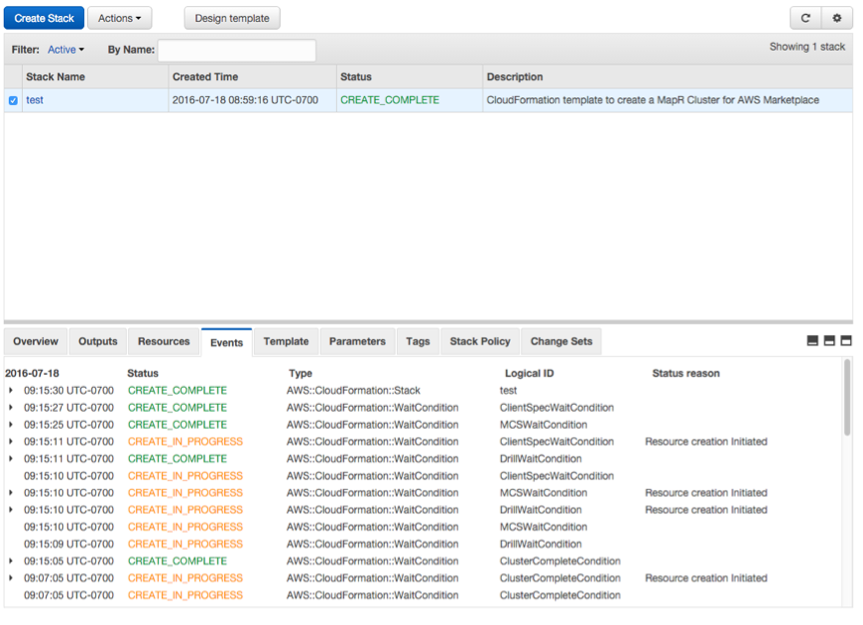

If all goes well, the stack should be created successfully and the instance launched. The MapR cluster itself is deployed in the next step.
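The console steps in this section can also be scripted. The following is a minimal sketch using the AWS CLI, not the author's method: the stack name, key name, and region are placeholders, and the `KeyName` parameter key is an assumption, so check the template's Parameters section for the real names. The sketch only prints the commands unless `DRY_RUN=0` is set.

```shell
#!/bin/sh
# Sketch: mirror the console steps with the AWS CLI.
# ASSUMPTIONS: "KeyName" is a guessed parameter key; stack name, key name,
# and region below are placeholders, not values from the article.

TEMPLATE_URL="https://raw.githubusercontent.com/jsunmapr/AWS-CFTs/master/520/MapR520-community-Docker.template"
TEMPLATE_FILE="MapR520-community-Docker.template"

# run CMD...: print the command in dry-run mode (the default), otherwise run it.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    printf '+ %s\n' "$*"
  else
    "$@"
  fi
}

# create_stack STACK_NAME KEY_NAME REGION
create_stack() {
  stack_name=$1
  key_name=$2
  region=$3
  # Download the template, create the stack, then block until it is complete.
  run curl -fsSL -o "$TEMPLATE_FILE" "$TEMPLATE_URL"
  run aws cloudformation create-stack \
    --region "$region" \
    --stack-name "$stack_name" \
    --template-body "file://$TEMPLATE_FILE" \
    --parameters "ParameterKey=KeyName,ParameterValue=$key_name"
  run aws cloudformation wait stack-create-complete \
    --region "$region" --stack-name "$stack_name"
}

# Dry run with placeholder values: prints the three commands.
create_stack mapr-mini my-key us-west-2
```

If the template creates IAM resources, `create-stack` additionally needs `--capabilities CAPABILITY_IAM`; whether this template does is not stated in the article.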

Log In to the Instance and Run the MapR Deployment Script

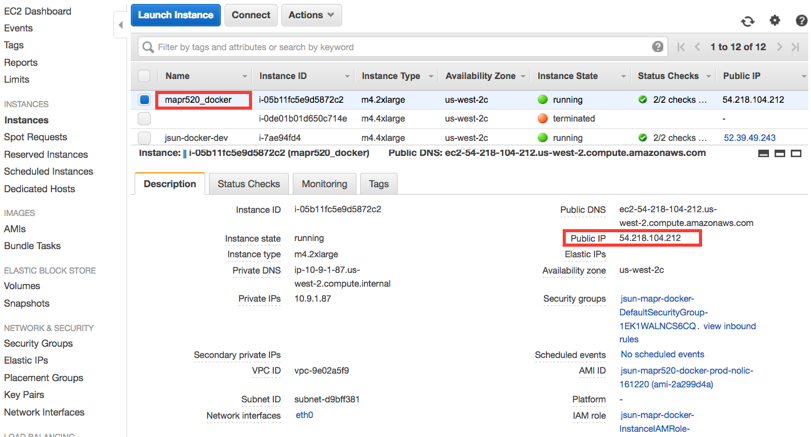

- Find the external IP address of the newly spun up instance by going to the EC2 portal. Locate the instance named “mapr520_docker,” and its IP address should show up.

- Wait until the status of the instance changes from “Initializing” to “2/2 checks passed,” then ssh into the instance by issuing the command “ssh ec2-user@<IP address>” from your computer.

- Once you are in the instance, issue this command to deploy the MapR cluster: “sudo /usr/bin/deploy-mapr”. You will be prompted to answer several questions, which should be self-explanatory; if you are not sure, keep the default answers. Write down your selections because you will need them later. The script then walks you through the setup process interactively. In 20–30 minutes, you should have a cluster up and running.

- If you make a mistake, don’t worry. Just repeat the previous step to reinstall. Likewise, if you want to try a different security mode, simply rerun the previous step; the old cluster will be deleted, and a new cluster will be deployed.
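The lookup, wait, and login steps above can be sketched with the AWS CLI as follows. This is an illustration under assumptions, not part of the original walkthrough: it assumes the instance name “mapr520_docker” is stored in the EC2 `Name` tag, and the function names `find_instance` and `connect` are hypothetical.

```shell
#!/bin/sh
# Sketch: locate the instance, wait for its status checks, then ssh in.
# ASSUMPTION: the instance name "mapr520_docker" is its EC2 "Name" tag.

# find_instance REGION: print "<instance-id> <public-ip>" for the running instance.
find_instance() {
  aws ec2 describe-instances --region "$1" \
    --filters "Name=tag:Name,Values=mapr520_docker" \
              "Name=instance-state-name,Values=running" \
    --query 'Reservations[].Instances[].[InstanceId,PublicIpAddress]' \
    --output text
}

# connect REGION: wait until the status checks pass, then open an SSH session.
connect() {
  region=$1
  # Split the lookup result into positional parameters: $1 = id, $2 = public IP.
  set -- $(find_instance "$region")
  [ $# -ge 2 ] || { echo "instance not found" >&2; return 1; }
  aws ec2 wait instance-status-ok --region "$region" --instance-ids "$1"
  ssh "ec2-user@$2"
}
```

Calling `connect us-west-2` (with your own region) corresponds to the manual steps; after logging in, run “sudo /usr/bin/deploy-mapr” as described above.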

Apply the Trial License (Optional)

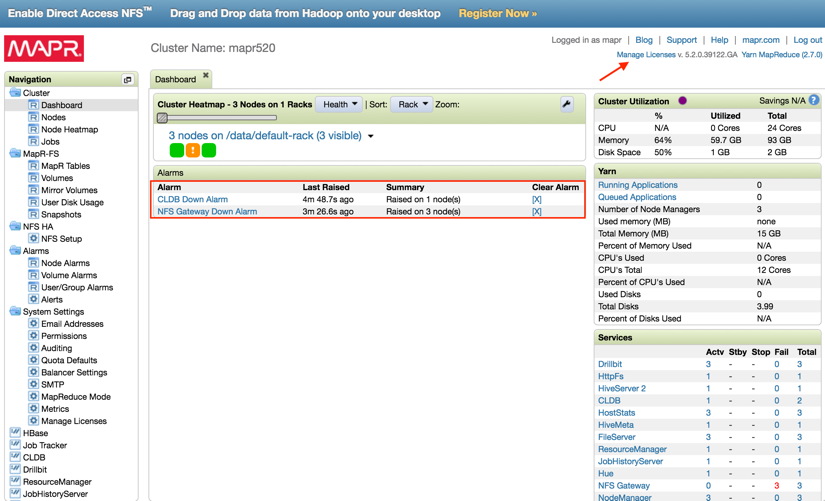

At this point the cluster is functional, and you can start to explore it even without a license. However, to take advantage of capabilities such as HA, the NFS gateway, and others, you will have to apply a 30-day unlimited trial license.

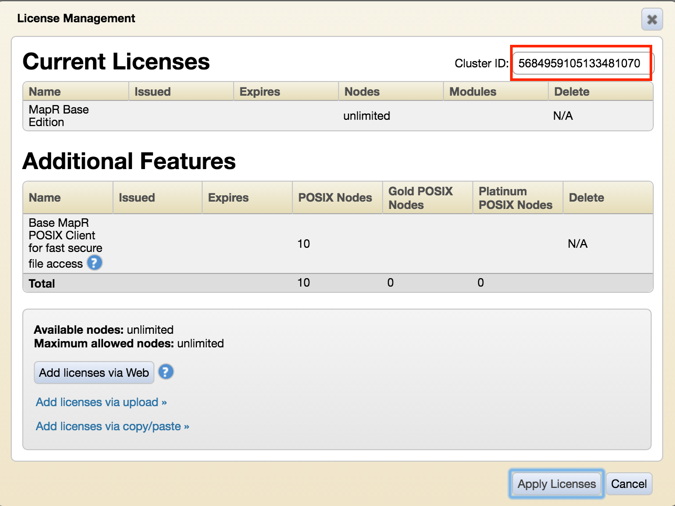

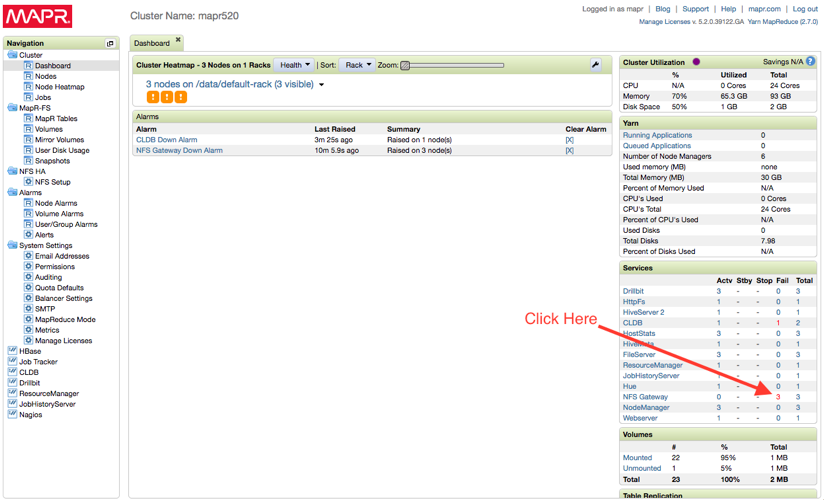

- Point your browser to the MapR Control System (MCS) page: https://<IP address of the instance>:8443, log in as the admin user, and enter the password that you assigned in the previous step. Click on the “Manage Licenses” tab in the upper right corner. Copy the cluster ID.

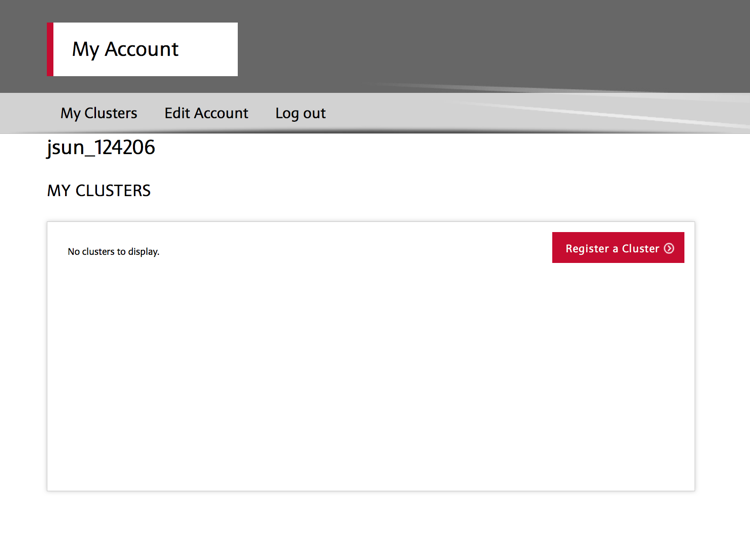

- Now go to www.mapr.com and register an account with MapR (the login link is near the upper right corner of the home page). After you are logged in, select the “My Clusters” tab and click “Register a Cluster.”

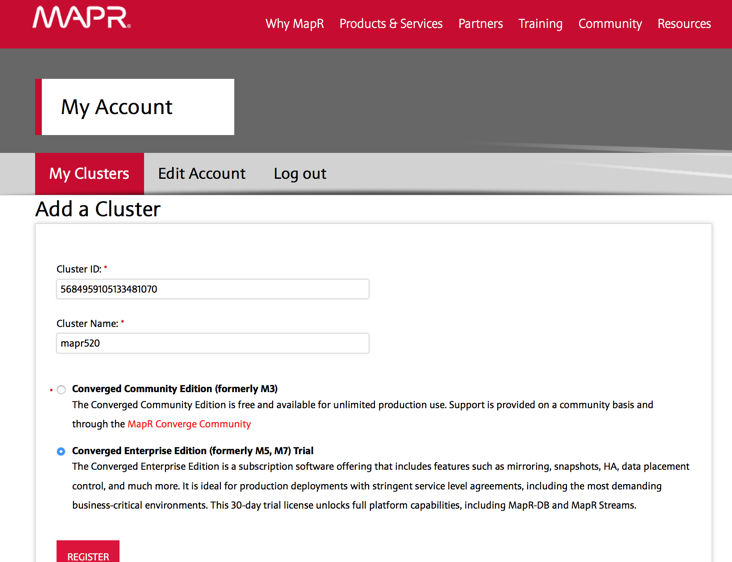

- Fill in the cluster ID and cluster name and hit “Register.”

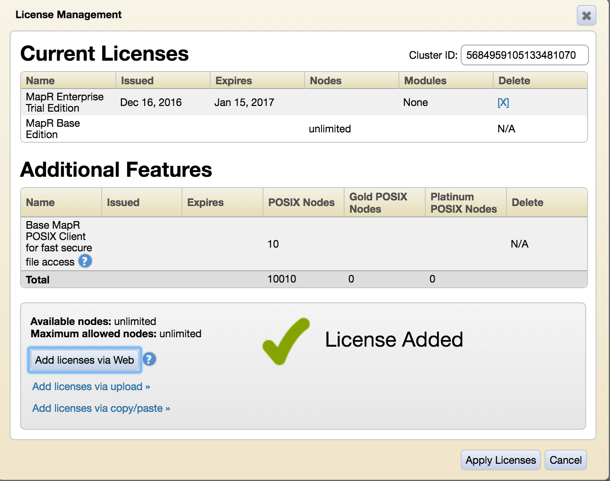

- Now go back to the MCS page and click “Add license via Web” to apply the license.

- Once the license is applied, you can start the NFS gateway services from the MCS portal.
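If the MCS page does not load in the browser, a quick reachability check from your computer can help narrow things down. The sketch below is an assumption-laden illustration: the helper names are hypothetical, and `-k` is used on the assumption that a freshly deployed cluster serves a self-signed certificate.

```shell
#!/bin/sh
# Sketch: check that the MCS endpoint on port 8443 answers over HTTPS.

# mcs_url IP: build the MCS address used in the article.
mcs_url() {
  printf 'https://%s:8443\n' "$1"
}

# mcs_status IP: print the HTTP status code returned by MCS (000 if unreachable).
# ASSUMPTION: -k skips certificate verification for a self-signed certificate.
mcs_status() {
  curl -k -s -o /dev/null -w '%{http_code}\n' --max-time 10 "$(mcs_url "$1")"
}
```

For example, `mcs_status <IP address of the instance>` printing `000` suggests the port is not reachable, in which case the instance's security group is worth checking.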

Start Exploring the Cluster

The mini cluster comes with some sample scripts/data included to get you started. To start exploring, you first have to log in to the client container.

- Go back to your instance shell prompt. Type “sudo ent” to get into the client container.

Example:

    # ent
    CONTAINER      NAMES
    5af123c10715   mapr-client
    d078b54a942f   mapr520-node0
    2b414d3d0d2a   mapr520-node1
    47c73d048f35   mapr520-node2
    bd393b70ae8e   kdc
    546c8efc27e1   ldap
    Which containter you want to enter? 5af123c10715
    [root@mapr-client /]#
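The article does not show how “ent” works internally. Assuming it is a thin wrapper around Docker (an assumption, not something the source confirms), roughly the same result can be had with plain Docker commands. The function names below are hypothetical.

```shell
#!/bin/sh
# Sketch: plain-Docker equivalents of listing and entering a container.
# ASSUMPTION: "ent" wraps commands like these; the article does not say so.

# list_containers: show running container IDs and names.
list_containers() {
  sudo docker ps --format '{{.ID}}  {{.Names}}'
}

# enter_container NAME_OR_ID: open an interactive bash shell in the container.
enter_container() {
  sudo docker exec -it "$1" bash
}
```

For example, `enter_container mapr-client` would open a shell in the client container, since `docker exec` accepts a container name as well as an ID.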

- Now let’s become the LDAP user; note that the LDAP username doesn’t exist in the local /etc/passwd file.

    [root@mapr-client /]# su - ldapdude
    Last login: Tue Dec 20 22:57:06 UTC 2016
    [ldapdude@mapr-client ~]$

- If you have a secure cluster (MapR ticket), use the maprlogin command to obtain a MapR ticket; otherwise you won’t be able to access the filesystem.

    [ldapdude@mapr-client ~]$ maprlogin password
    [Password for user 'ldapdude' at cluster 'mapr520': ] xxxxxxx
    MapR credentials of user 'ldapdude' for cluster 'mapr520' are written to '/tmp/maprticket_5000'

- If you have a Kerberized cluster, use the kinit command to obtain a Kerberos ticket.

    [ldapdude@mapr-client ~]$ kinit
    Password for ldapdude@EXAMPLE.COM: xxxxxxx
    [ldapdude@mapr-client ~]$ hadoop fs -ls /
    16/12/21 04:33:34 INFO client.MapRLoginHttpsClient: MapR credentials of user 'ldapdude' for cluster 'mapr520' are written to '/tmp/maprticket_5000'
    MapR credentials of user 'ldapdude' for cluster 'mapr520' are written to '/tmp/maprticket_5000'
    Found 7 items
    drwxr-xr-x   - maprdude maprdude          1 2016-12-20 22:34 /apps
    drwxr-xr-x   - mapr     mapr              0 2016-12-20 22:32 /hbase
    drwxr-xr-x   - mapr     mapr              0 2016-12-20 22:34 /opt
    drwxr-xr-x   - root     root              0 2016-12-20 22:34 /tables
    drwxrwxrwx   - mapr     mapr              2 2016-12-20 23:01 /tmp
    drwxr-xr-x   - root     root              4 2016-12-20 22:34 /user
    drwxr-xr-x   - mapr     mapr              1 2016-12-20 22:32 /var
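Which login command applies depends on the security mode chosen during deployment. As a small sketch of that decision, the helper below maps a mode to the command shown in the examples above; the mode labels (`non-secure`, `secure`, `kerberos`) and the function names are hypothetical, chosen only for illustration.

```shell
#!/bin/sh
# Sketch: pick the login step that matches the cluster's security mode.
# ASSUMPTION: the mode labels are illustrative, not deploy-mapr's own names.

# login_command MODE: print the command needed before accessing the filesystem.
login_command() {
  case "$1" in
    non-secure) ;;                              # no ticket needed
    secure)     echo "maprlogin password" ;;    # obtain a MapR ticket
    kerberos)   echo "kinit" ;;                 # obtain a Kerberos ticket
    *)          echo "unknown mode: $1" >&2; return 1 ;;
  esac
}

# check_access MODE: log in if required, then list the filesystem root.
check_access() {
  cmd=$(login_command "$1") || return 1
  if [ -n "$cmd" ]; then
    $cmd || return 1    # unquoted on purpose so the command and its argument split
  fi
  hadoop fs -ls /
}
```

Run inside the client container as the LDAP user, `check_access secure` would prompt for the password and then list the root directory.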

- To get started with Apache Drill, go to /opt/data/drill. Example:

    [ldapdude@mapr-client ~]$ cd /opt/data/drill
    [ldapdude@mapr-client ~]$ /opt/mapr/drill/drill-1.8.0/bin/sqlline -u jdbc:drill:zk=mapr520-node0:5181,mapr520-node1:5181,mapr520-node2:5181/drill/mapr520-drillbits -f review.sql
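The JDBC URL in the Drill example lists each node with ZooKeeper port 5181, followed by a path ending in the cluster name plus “-drillbits”. The sketch below rebuilds that string from its parts. The naming pattern is inferred from this single example, so treat it as an assumption if your cluster name or node list differs; `drill_jdbc_url` is a hypothetical helper.

```shell
#!/bin/sh
# Sketch: compose the Drill JDBC URL from the cluster name and node names.
# ASSUMPTION: the "/drill/<cluster>-drillbits" pattern is inferred from the example.

# drill_jdbc_url CLUSTER NODE...: join NODE:5181 entries with commas.
drill_jdbc_url() {
  cluster=$1
  shift
  zk=""
  for node in "$@"; do
    zk="${zk:+$zk,}$node:5181"    # add a comma only between entries
  done
  printf 'jdbc:drill:zk=%s/drill/%s-drillbits\n' "$zk" "$cluster"
}

# Prints the same URL used in the sqlline example above.
drill_jdbc_url mapr520 mapr520-node0 mapr520-node1 mapr520-node2
```

The result can be passed to sqlline with `-u "$(drill_jdbc_url mapr520 mapr520-node0 mapr520-node1 mapr520-node2)"`.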

- To get started with Spark, check out Carol McDonald’s blog post to analyze Uber data.

Note that when the cluster is first started, processing can be a bit slow, depending on the AWS region you are in. Thanks to Docker’s caching capability, however, you will find that the speed increases over time.

Summary

The mini MapR cluster is an excellent way to experience a production-like environment for the MapR Converged Data Platform. It can be secured (with or without Kerberos). It has a separate client container and can be integrated with your choice of third-party software. And it gives you the full feature set that the MapR Platform has to offer without having to spin up multiple node instances in the cloud.

| Reference: | Deploying a Secure Mini MapR Cluster with Docker on a Single AWS Instance from our JCG partner James Sun at the MapR blog. |