Demystifying the Event Driven Architecture – An open solution (part 3)

High throughput, resiliency, scalability, and speed: are you searching for a way to leverage microservice integration to handle all the event-driven communications in your growing architecture landscape?

Search no further.

This series of articles guides you through the world of integration using microservice architecture and specifically explores Event Driven Architecture (EDA). It's a central story for organizations moving forward into the digital world and is worth exploring as part of your strategy for continued success.

The first article introduced how EDA might be the right choice for your microservice integration solutions, with a more detailed examination of when you might not need EDA at all. The second article pivoted back to exploring use cases that align with EDA solutions and presented real-world examples. This last article looks at the open technologies that can help you implement an EDA architecture.

An open architecture

The ideal use of EDA as an integration architecture has its foundations in solid business advantages. It gives you the ability to react in near real time to the continuously changing markets you operate in. With data communication latency reduced to mere milliseconds, you can make informed decisions based on up-to-date information across your enterprise systems. And with systems that scale to big data volumes under an EDA architecture, you can ensure reliable communications and operational integrity, with even less downtime.

So how does this look in an open EDA architecture?

In this case, the focus of open is on leveraging open technologies for a flexible EDA architecture. Using open technologies lets you select the most effective, standards-aligned, and widely recognised solution pieces for your architecture, in line with best practices.

One of the core technologies currently being leveraged for open EDA architectures is Apache Kafka, which delivers the integration layer for building real-time data streams to capture your events. Not only can you develop streaming applications, but it also provides the infrastructure that enables your development teams to create scalable stream processing applications. Its powerful capability to store streaming data safely in a fault-tolerant environment rounds out the requirements of most organizations implementing an EDA architecture today.

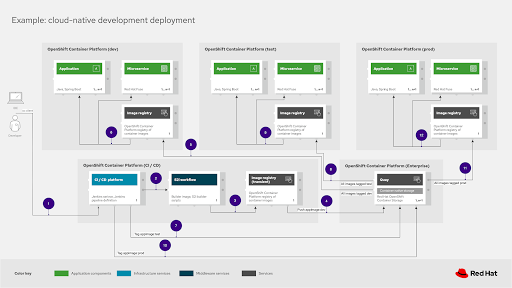
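
To make the idea of capturing events in a stored stream a little more concrete, here is a minimal conceptual sketch in plain Java. It is not the Apache Kafka client API; the class `EventLogSketch` and its methods `publish`, `poll`, and `rewind` are hypothetical names chosen for illustration. It only models the underlying idea, assuming a single in-memory log: events are appended and kept, and each consumer group tracks its own read position, so new consumers can still see earlier events.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/**
 * Conceptual, in-memory model of an append-only event log with
 * per-consumer-group offsets. All names here are hypothetical;
 * this is NOT the Apache Kafka client API.
 */
public class EventLogSketch {
    // Events are only ever appended, never modified or removed.
    private final List<String> events = new ArrayList<>();
    // Each consumer group remembers how far it has read, independently.
    private final Map<String, Integer> offsets = new HashMap<>();

    /** Appends an event and returns its offset (its position in the log). */
    public synchronized int publish(String event) {
        events.add(event);
        return events.size() - 1;
    }

    /** Returns the events this group has not yet seen and advances its offset. */
    public synchronized List<String> poll(String group) {
        int from = offsets.getOrDefault(group, 0);
        List<String> unread = new ArrayList<>(events.subList(from, events.size()));
        offsets.put(group, events.size());
        return unread;
    }

    /** Rewinds a group to the start so it can replay the stored history. */
    public synchronized void rewind(String group) {
        offsets.put(group, 0);
    }

    public static void main(String[] args) {
        EventLogSketch log = new EventLogSketch();
        log.publish("order-created");
        log.publish("payment-received");

        // The billing group reads everything published so far.
        System.out.println("billing:   " + log.poll("billing"));

        log.publish("order-shipped");

        // Billing only sees the new event; analytics, arriving late, sees all three.
        System.out.println("billing:   " + log.poll("billing"));
        System.out.println("analytics: " + log.poll("analytics"));
    }
}
```

A real deployment adds what this sketch leaves out, such as persistence to disk, replication across brokers for fault tolerance, and partitioning for scale, which is where a platform like Kafka comes in.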

When looking at modern cloud-native development and deployments, containers remain an essential component of any architecture. Combining Kafka with a container platform helps meet the scalability, microservices, automation, and operations needs of fully automated cloud-native deployments into production.

There are many different views on how to design an EDA architecture, and many of the decisions you need to make depend on existing architectural limitations. Maybe you have to deal with existing components that prevent full adoption of an open EDA architecture, but nothing prevents you from taking a hybrid path on the way to an eventual open EDA architecture.

In this case, it might be interesting to look closer at integration across all your channels when engaging your customers, as discussed in the omnichannel architecture blueprint series. Another interesting aspect of any modern EDA architecture is examined in the cloud-native development blueprint series.

What’s out there for you?

This article completes the series and hopefully helps you position EDA, understand how important it is, and see how it could play a role in your solution architectures.

If you’re interested in exploring EDA solutions using open source technologies, then take a look at getting started with event-driven architecture using Apache Kafka or this free e-book on designing event-driven applications.

Published on Java Code Geeks with permission by Eric Schabell, partner at our JCG program. See the original article here: Demystifying the Event Driven Architecture – An open solution (part 3). Opinions expressed by Java Code Geeks contributors are their own.
