Yet Another Way to Learn Recursion

Every semester, I pass around a survey to get some feedback on my teaching. This past semester someone finally gave me an idea for a new article. In particular, they wanted to learn some more about recursion, so I figured I’d put together some tips.

Recursion Overview

For those of you that might be learning recursion for the first time, I figured I’d provide a bit of an overview of the concept.

In particular, recursion is a problem solving technique that relies on solving smaller subproblems. In other words, instead of solving a problem directly, we continue to break the problem down until we get to some smaller problem that we can actually solve. Then, we use the answer to the smallest subproblem to work our way backwards until we have the answer to our original question.

For example, let’s say we want to compute 2^6. Normally, we would break this down into repeated multiplication. In other words, we would multiply six 2s together to yield 64. In Computer Science, we call this problem solving technique iteration, and we usually see it in the form of a loop:

```python
def iterative_power(base, exponent):
    product = 1
    for i in range(exponent):
        product *= base
    return product

print(iterative_power(2, 6))
```

Of course, what if we already knew the answer to some smaller subproblem? For instance, what if we knew that 2^5 is 32? Then, we could directly compute 2^6 by multiplying 2^5 by 2. That’s the idea behind recursion:

```python
def recursive_power(base, exponent):
    if exponent == 0:
        return 1
    else:
        return recursive_power(base, exponent - 1) * base
```

In this article, we’ll take a look at a different way of thinking of recursion. Hopefully, it helps you understand the concept better than before.

Why Is Recursion So Hard?

While functional programming is currently making a comeback, it hasn’t had a huge impact in the way we learn to code. As a result, most folks start coding with a C-like imperative programming language. For example, I got started with Java and moved into languages like C, C++, C#, and Python.

Unfortunately, the downside of learning languages like C is that we’re sort of constrained in how we think about problem solving. In other words, the tool set that is provided to us in these languages is largely biased toward branching (i.e. if statements and loops).

As a result, the intuition behind recursion isn’t built in from the start. Instead, we’re forced to introduce recursion using contrived examples that tie back to iterative solutions. Otherwise, how else would we relate?

In addition, imperative languages like C require a lot of overhead before recursion can be introduced. For instance, you probably had to learn concepts like variables, branching, and functions before you could even start thinking about recursion. In fact, I wasn’t personally introduced to the concept until my first data structures course.

Altogether, it can be hard to grasp recursion because it requires your understanding of coding to be turned on its head. Luckily, there are ways to break the cycle (pun absolutely intended).

Learning Recursion Through Design by Contract

Recently, I was training to teach a software components course at The Ohio State University, and one of the topics they covered in that course was recursion. Of course, I was already quite familiar with recursion at the time, but I thought the way they taught the subject was really elegant. As a result, I figured I could pass that method on to you. That said, we’ll need to tackle Design by Contract first.

Introduction to Design by Contract

I apologize if I’m repeating myself a bit as I have written a bit about Design by Contract in the past—mainly in reference to JUnit testing. Naturally, I think I’m a slightly better writer now, but feel free to refer to that article as well.

At any rate, Design by Contract (DbC) is a programming philosophy where we think about functions in terms of contracts. In other words, we want to provide some sort of guarantee to our users when they use one of our functions. In particular, we ask our users to execute each function under certain conditions (aka preconditions). As a consequence, we promise that each function will behave a certain way (aka postconditions).

DbC is important in recursion because it allows us to specify exactly what a function will do under certain conditions. For example, the power functions we defined earlier work only as long as we specify a few preconditions:

- Both parameters must be integers (could be taken care of with some type hints)

- The exponent parameter cannot be negative

As a result, we promise that both functions will return the base raised to some power. If a user enters invalid inputs, we don’t make any promises as they’ve clearly broken the contract. Of course, we can always provide some sort of input verification to prevent nasty bugs, but that’s outside the scope of DbC.
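To make the contract concrete, here is a minimal sketch of what optional input verification could look like. The name `checked_power` and the choice of exceptions are assumptions for illustration only; DbC itself does not require these checks.

```python
def checked_power(base: int, exponent: int) -> int:
    """Computes base ^ exponent.

    Precondition: base and exponent are integers, exponent >= 0
    Postcondition: returns base ^ exponent
    """
    # Hypothetical input verification: reject callers that break the contract.
    if not isinstance(base, int) or not isinstance(exponent, int):
        raise TypeError("both parameters must be integers")
    if exponent < 0:
        raise ValueError("exponent cannot be negative")

    # Same iterative computation as before.
    product = 1
    for _ in range(exponent):
        product *= base
    return product


print(checked_power(2, 6))
```

With checks like these, a broken contract fails loudly instead of silently returning a wrong answer.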

Free Lunch for All

At OSU, we introduce recursion by ignoring the idea of recursion altogether. Instead, we leverage what we know about Design by Contract to start implementing recursive functions implicitly.

For example, let’s take another look at this power function we keep talking about:

```python
def power(base: int, exponent: int) -> int:
    """Computes the base ^ exponent.

    Precondition: exponent >= 0
    Postcondition: base ^ exponent
    """
    return FreeLunch.power(base, exponent)
```

In this example, we introduce a new power function from a magical library called FreeLunch. As far as we’re concerned, this new power function is exactly the same as the one we have written—same exact contract.

Now, for the sake of argument, let’s say that this FreeLunch power function has a requirement: its input must be “smaller” than the input of our power function. What can we do to ensure this works?

Well, if we decrease the exponent, we can add it back by multiplying the base (i.e. x^5 = x^4 * x). Let’s try that:

```python
def power(base: int, exponent: int) -> int:
    """Computes the base ^ exponent.

    Precondition: exponent >= 0
    Postcondition: base ^ exponent
    """
    return FreeLunch.power(base, exponent - 1) * base
```

At this point, how do we go about verifying that this works? Well, let’s try some inputs. For example, we could try 2^6 again. If we trace over the code, we’ll notice that we call FreeLunch.power(2, 5), which is a completely valid input. In other words, we’ll get 32 back, which we multiply by 2 to get 64, the correct answer.

That said, are there any inputs that would fail? Absolutely! Since the FreeLunch power function shares the same contract as our power function, there are inputs which are invalid. For example, we should avoid any situation in which the FreeLunch power function is passed a value smaller than 0. That happens when our own exponent is 0, since we would pass along an exponent of -1. In that case, we can return 1 directly:

```python
def power(base: int, exponent: int) -> int:
    """Computes the base ^ exponent.

    Precondition: exponent >= 0
    Postcondition: base ^ exponent
    """
    if exponent == 0:
        return 1
    else:
        return FreeLunch.power(base, exponent - 1) * base
```

Now, we can be certain our power function works.
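If you’d like to run this version yourself, you need something to play the role of FreeLunch. The class below is a hypothetical stand-in, not a real library: it simply leans on Python’s built-in `**` operator and enforces the same precondition as our own function.

```python
class FreeLunch:
    """Hypothetical stand-in for the magical FreeLunch library."""

    @staticmethod
    def power(base: int, exponent: int) -> int:
        # Same contract as our power function: exponent >= 0.
        if exponent < 0:
            raise ValueError("contract broken: exponent must be >= 0")
        # The built-in operator plays the part of the "magic" here.
        return base ** exponent


def power(base: int, exponent: int) -> int:
    """Computes the base ^ exponent.

    Precondition: exponent >= 0
    Postcondition: base ^ exponent
    """
    if exponent == 0:
        return 1
    else:
        return FreeLunch.power(base, exponent - 1) * base


print(power(2, 6))
print(power(2, 0))
```

Notice that the check for an exponent of 0 is what keeps us from ever handing FreeLunch a negative exponent.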

There Is No Free Lunch

As you can probably imagine, implementing the power function in this convoluted way was just an exercise to trick you into using recursion. In other words, we can completely remove the FreeLunch class above, and we’d have a fully functioning recursive solution:

```python
def power(base: int, exponent: int) -> int:
    """Computes the base ^ exponent.

    Precondition: exponent >= 0
    Postcondition: base ^ exponent
    """
    if exponent == 0:
        return 1
    else:
        return power(base, exponent - 1) * base
```

Now, the question is: why would we go through all this effort to introduce recursion? After all, almost every other recursion tutorial leverages some sort of stack. Why don’t we do that?

While it’s perfectly valid to teach recursion using the stack, it’s often quite cumbersome for students. For example, imagine tracing through the power function above without using our FreeLunch trick. If we provide two random inputs—say a base of 2 and an exponent of 6—we would have to trace through each power function call until we reached the smallest subproblem. On paper, that’s six traces for one simple example!
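To get a feel for what that manual trace looks like, here is a rough sketch that prints every call as it happens. The `traced_power` name and the `depth` parameter are additions for illustration and are not part of the power function above.

```python
def traced_power(base: int, exponent: int, depth: int = 0) -> int:
    """Same logic as power, but prints each call and its result."""
    indent = "  " * depth  # deeper calls are indented further
    print(f"{indent}power({base}, {exponent})")
    if exponent == 0:
        result = 1
    else:
        result = traced_power(base, exponent - 1, depth + 1) * base
    print(f"{indent}-> {result}")
    return result


traced_power(2, 6)
```

Every level of indentation in the output is another call you would have to keep track of by hand.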

When taught this way, students can often find themselves getting lost in the recursion as they try various inputs on their program. If they were to instead suspend their disbelief and trust in the contract, they could prove their function works on a single pass. Of course, they’ll need to pay some attention to their inputs (see mathematical induction).
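For the curious, trusting the contract is essentially an induction argument. Here is an informal sketch for the power function, writing power(a, n) for a call with base a and exponent n:

```latex
\textbf{Claim.} For every integer $n \ge 0$, $\mathrm{power}(a, n) = a^n$.

\textbf{Base case} ($n = 0$): the function returns $1 = a^0$.

\textbf{Inductive step} ($n > 0$): assume the contract holds for the smaller
input, i.e. $\mathrm{power}(a, n-1) = a^{n-1}$. Then
\[
  \mathrm{power}(a, n) = \mathrm{power}(a, n-1) \cdot a = a^{n-1} \cdot a = a^n .
\]
```

The assumption in the inductive step plays the same role as the FreeLunch call: we take the smaller answer for granted and only check our own step.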

Recursive Roots

Now that we’ve seen some examples, I want to talk a little bit about where recursion comes from. After all, recursion is often this scary subject that seems to appear when convenient in Computer Science lectures. Luckily, it has a much more interesting history than that.

Remember how we looked at a power function previously? That was no accident. In fact, there are loads of mathematical formulas which can be written recursively. For instance, the power formula looks as follows:

a^n = a^(n-1) * a (if n > 0)

Naturally, a^n can continually be decomposed until the exponent is either 1 or 0, depending on how we’d like to terminate our recursion. In our examples above, we ignored the n = 1 case because the n = 0 case gives us 1, which works out fine. In other words, adding an extra case for n = 1 has no effect on the result.

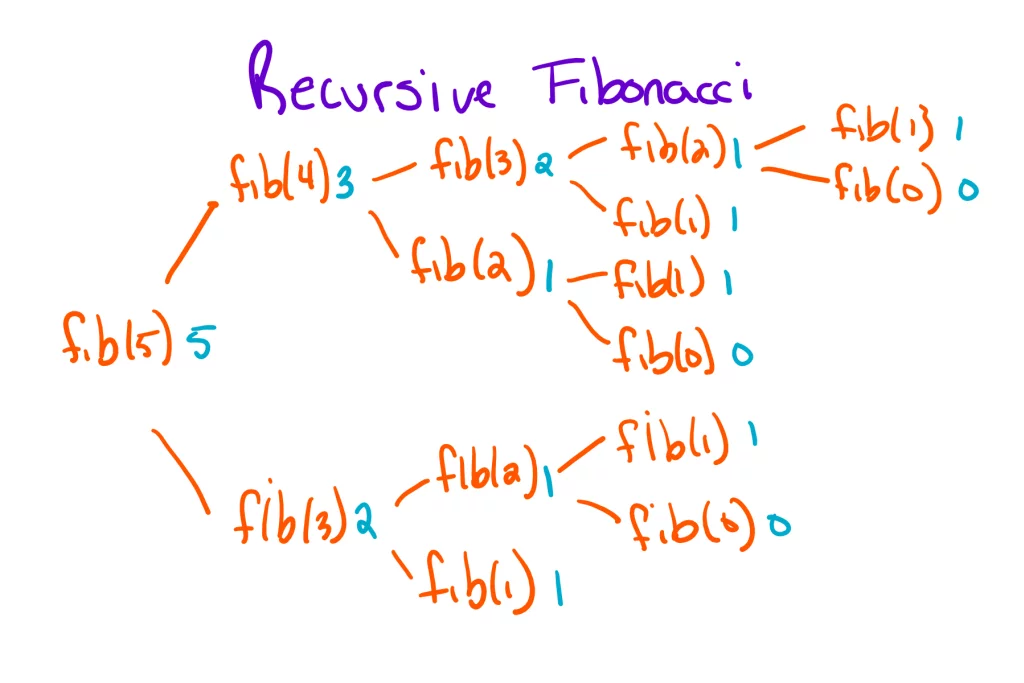
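As a quick sanity check on the n = 1 remark, here is a small sketch comparing the two ways of terminating the recursion. Both function names are made up for this comparison.

```python
def power_stop_at_zero(a: int, n: int) -> int:
    """Terminates only when n == 0."""
    if n == 0:
        return 1
    return power_stop_at_zero(a, n - 1) * a


def power_stop_at_one(a: int, n: int) -> int:
    """Adds an extra case for n == 1 on top of n == 0."""
    if n == 0:
        return 1
    if n == 1:
        return a
    return power_stop_at_one(a, n - 1) * a


# Both versions agree for every base and exponent we try.
for a in range(-3, 4):
    for n in range(8):
        assert power_stop_at_zero(a, n) == power_stop_at_one(a, n)
```

The extra case saves a single call at best, which is why the n = 0 case alone is enough.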

Like power, there are several other recursive formulas that you probably already know. For example, the Fibonacci sequence is defined by a recursive formula:

a_n = a_(n-1) + a_(n-2)

To make sure this formula works, we have to define the first two terms. Then, everything works out fine. Depending on who you ask, the first two terms could be 0 and 1 or 1 and 1. Regardless, both pairs of numbers work.

If we want to then compute any arbitrary term in the sequence, we decompose the original function until we hit either of our base cases. For example, the following diagram illustrates how we would compute the fifth term in the Fibonacci sequence:

We can argue about the efficiency of a recursive algorithm like this all day, but this is a valid way to solve the problem. Instead of solving the problem in incremental steps, we break the problem down into smaller problems until we find a problem we can solve. Then, we use that result to work our way backwards until we have our final answer.
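Translated to code, the Fibonacci formula might look something like the sketch below. It assumes the first two terms are 0 and 1, and it adds a cached variant using `functools.lru_cache` from the standard library as one possible answer to the efficiency concern.

```python
from functools import lru_cache


def fib(n: int) -> int:
    """Returns the nth Fibonacci term, assuming fib(0) = 0 and fib(1) = 1.

    Precondition: n >= 0
    """
    if n < 2:  # base cases: the first two terms
        return n
    return fib(n - 1) + fib(n - 2)


@lru_cache(maxsize=None)
def fib_cached(n: int) -> int:
    """Same formula, but each term is only computed once."""
    if n < 2:
        return n
    return fib_cached(n - 1) + fib_cached(n - 2)


print(fib(5))
print(fib_cached(50))
```

The plain version recomputes the same terms over and over, while the cached version remembers them. Either way, the recursive structure is identical.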

Recursive Structures

Now that we’ve looked at recursion through the lens of mathematics and Design by Contract, the question becomes: how do we recognize problems where recursion would be beneficial? In other words, are there problems that have some form of recursive structure? Let’s talk about it.

As it turns out, there are recursive structures all around us. For example, the Fibonacci sequence is explicitly defined as a recursive formula. Of course, there are other types of recursive structures. For instance, the tree diagram above is a recursive structure. After all, each call to “fib” creates yet another tree. In other words, there are trees inside trees.

Outside of technology, there are other examples of recursive structures like fractals which are collections of geometric shapes that retain their structure at any scale. For those of you familiar with the Triforce, it is a perfect example of a simple fractal: a triangle made of triangles.

Likewise, there are several everyday recursive structures like directories (folders containing folders) and onions (onions containing onions). In other words, hierarchical and nesting structures are usually good indicators of recursion.

From these examples, are there any sort of patterns we can recognize? Well, of course! The key here is to observe the structure of a problem. Is the problem itself made up of similar problems? If so, recursion is probably the way to go. If not, it might make more sense to try something else.
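To illustrate, here is a small sketch that counts the files in a folder made of folders. The nested dictionary is a made-up stand-in for a real file system: a dictionary is a folder, and anything else is a file.

```python
def count_files(folder: dict) -> int:
    """Counts the files in a folder, including those in nested folders."""
    total = 0
    for entry in folder.values():
        if isinstance(entry, dict):
            # A folder inside a folder: the same problem, only smaller.
            total += count_files(entry)
        else:
            total += 1
    return total


# Hypothetical folder: file names map to sizes, folder names map to dicts.
documents = {
    "notes.txt": 120,
    "photos": {"cat.png": 2048, "trips": {"rome.jpg": 4096}},
    "empty": {},
}

print(count_files(documents))
```

Because every subfolder looks just like the folder that contains it, the function can trust itself to handle the smaller folder.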

Famous Recursive Algorithms

With all that said, I think it’s helpful to put together a short list of famous recursive algorithms for inspiration purposes. Feel free to share some of your favorites in the comments:

- Merge Sort and Quick Sort

- Factorial, Power, and Fibonacci

- Greatest Common Divisor

- Tower of Hanoi

- Tree Traversal

- Depth First Search
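As a taste of one item from the list, here is a sketch of Greatest Common Divisor using Euclid’s algorithm, written with a contract in the same style as the power function. The exact wording of the contract is my own.

```python
def gcd(a: int, b: int) -> int:
    """Computes the greatest common divisor of a and b.

    Precondition: a >= 0 and b >= 0
    Postcondition: returns the largest integer dividing both a and b
    """
    if b == 0:
        return a  # smallest subproblem: gcd(a, 0) is a
    return gcd(b, a % b)  # a % b is always smaller than b


print(gcd(48, 18))
```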

And with that, I think we’ve covered about everything. If you have any questions that weren’t covered in the article, feel free to direct them to the comments below.

See Recursion

If you want to learn more about recursion, I recommend checking this really awesome article called Yet Another Way to Learn Recursion (sorry, I had to make at least one recursion joke). Otherwise, thanks for stopping by!

If you’d like to stick around, I have plenty of other articles for you:

- The Difference Between Statements and Expressions

- Rock Paper Scissors Using Modular Arithmetic

- Be Careful with String’s Substring Method in Java

Once again, thanks for your support. Every little bit goes a long way!

Published on Java Code Geeks with permission by Jeremy Grifski, partner at our JCG program. See the original article here: Yet Another Way to Learn Recursion. Opinions expressed by Java Code Geeks contributors are their own.