Why Docker containers are not used widely in Enterprises?

I am a great admirer of Solomon Hykes, whose company dotCloud, seed-financed by Trinity Ventures, went south and was on the verge of being sold for peanuts. Then Solomon and his close collaborators did something crazy: they decided to open-source the container technology they had built for dotCloud.

His investors were against it, yet Dan Scholnick, an open-minded VC investor from Trinity, wrote:

Thanks to Solomon and his team, we have a business called Docker that’s on fire and changing the world of software infrastructure….

Solomon Hykes is an exceptional entrepreneur. None of the success Docker is having today would have been possible without his insight and courage, to say nothing of the technical accomplishments. He knew when to cut bait and when to go with his gut. No combination of good idea and great investors can accomplish anything without exceptional, visionary founders at the helm. And of course, Solomon couldn’t have done it alone.

You can build a Docker container after a 30-minute tutorial on a Mac, Windows, or Linux machine.

Yet in January 2015, lots of people talked about Docker, a few built a proof of concept, and almost no one ran Docker in a production environment.

Analyst ravings

Here is a sample from Forrester's predictions for 2016, as described in this blog post dated December 29, 2015.

Containers are all the rage!

Over the past year Containers such as Docker have generated tremendous interest and uptake among well-known cloud providers, who use them to deliver some of the largest and most popular cloud services and applications. Container adoption is being driven by the promise that containers deliver the ability to “build once and run anywhere”, allowing increased server efficiency and scalability for technology managers.

Perhaps we can say this at the end of 2016, but we are still a few miles away from this vision becoming reality. Please read on and decide for yourself what the reality is in December 2015.

Security Concerns

One of the best recent articles on this issue is What can go wrong when security is ignored during development? by Filippos Raditsas. Some of the points he makes:

- New technologies expand the attack surface of a system.

- Attackers look at the new features and technologies as potential areas of weakness.

- A false belief that certain technologies are secure out-of-the-box solutions. Using them does not necessarily mean that a system will still be secure after a new technology is integrated.

- Early phases of the development life-cycle, such as analysis or design, where the requirements are gathered and the architectural components and technologies are chosen, may introduce security flaws.

For these reasons, the most effective solution is:

..exposing developers to security from an attacker’s point of view. More specifically, instead of just training them about secure coding practices, they should be guided through the discovery and exploitation of software vulnerabilities, as if they were the attackers.

The Rugged Manifesto

This group has existed since 2012 and helps bridge the gap between the developers’ community and the security community so that we can interact and collaborate more efficiently towards the common goal of reliable and secure applications.

Why is Docker still not widely used in enterprises?

Here are a few quotes from the December 4, 2015 blog post by Julian Dunn, a deep thinker, cool writer, and seasoned coder.

This post is simply about the horrifying realization that containerization opens up a whole new playing field for folks to abuse. Many technology professionals in the coming decades will bear the brunt of the mistakes people are making today in their use of containers. Worse, these mistakes will be even more long-lived, because containers — being portable artifacts independent of the runtime — can conceivably survive in the wild far longer than, say, a web application written using JSP, Struts 1.1 and running under Tomcat 3.

Here are the reasons:

Using containers without really understanding why

“The reason that ‘Docker Docker Docker’ is such a meme is because as with any new technology, there are large swaths of unqualified technology ‘professionals’ ready and eager to rub it all over everything. There’s no shortage of developers in startups insisting that Docker is the ‘future’ and that it must be used for everything.”

Blindly containerizing legacy applications

“.. jamming legacy applications into containers. This makes no sense. Containers are designed for per-process isolation, ergo, one process per container. Containers aren’t going to be particularly useful for running Oracle 12c or SAP HANA that have a million processes. You could containerize them, but why? If a service is not something you’ll be ripping and replacing frequently, there’s really no point.

I also see container technologies being used to keep legacy applications alive forever, which is even more terrifying. We can therefore expect to still be running today’s applications, inside containers, in 2050 or beyond.”

Ignoring devops principles

In a containerized world, however, it gets way worse for teams that haven’t adopted devops practices. Now, these application teams aren’t just throwing Java bytecode over the wall; they’re throwing entire machine images. Think of all the things that can go wrong on a Linux system and how much maintenance needs to be done to keep it running smoothly, like security patching, rebooting, performance tuning, and so on. Now apply all that work to a microservices world, where you could have tens or hundreds of thousands of containers, which are really full-blown Linux systems, running in production. Keeping the lights on will become a nightmare without good cooperation and shared responsibility between dev and ops. This will come crashing down on both groups when production failures occur.

Lack of standard quality assurance principles

How are enterprises to know that the image functions correctly? Or is compliant, or secure? Or that it will continue to be that way even if changes are made? Until container adopters realize that everything they learned about ensuring safety in the delivery of software products doesn’t get thrown away in a container universe, we can expect a lot of buggy, insecure applications being deployed to production or pushed to the Docker Hub where, like a virus, they will get used by others.

Giant container images containing all of userland

By default, most containers provide a full Linux system, with all of /bin, /usr, and so on. Too few people build base images, or start container builds from scratch (literally FROM scratch in Docker).

I believe that the “large attack surface” is a fundamental design problem with containers being an evolutionary, not a revolutionary step from VMs and bare metal. Container technology has been so successful purely in its ability to efficiently de-duplicate and package an entire Linux userland in a portable, run-anywhere image. But the application process still believes it is running inside a full multi-user Linux OS
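To make the point about base images concrete, here is a minimal Python sketch of a check a team could run over its Dockerfiles to see which base image a build starts from. It is only an illustration: the function names and the two sample Dockerfiles are hypothetical, and the parsing covers just the plain `FROM` instruction (with optional flags and an optional `AS` suffix), not every Dockerfile feature.

```python
import re
from typing import Optional

# Matches a Dockerfile FROM instruction: optional flags such as --platform=...,
# then the image reference, then an optional "AS <stage>" suffix.
_FROM_RE = re.compile(
    r"^\s*FROM\s+(?:--\S+\s+)*(?P<image>\S+)(?:\s+AS\s+\S+)?\s*$",
    re.IGNORECASE,
)


def final_base_image(dockerfile_text: str) -> Optional[str]:
    """Return the image named by the last FROM instruction, or None if absent."""
    image = None
    for line in dockerfile_text.splitlines():
        match = _FROM_RE.match(line)
        if match:
            image = match.group("image")
    return image


def starts_from_scratch(dockerfile_text: str) -> bool:
    """True when the last FROM instruction names Docker's empty `scratch` base."""
    return final_base_image(dockerfile_text) == "scratch"


# Hypothetical sample inputs: one full-userland image, one minimal image.
FULL_USERLAND = 'FROM ubuntu:14.04\nRUN apt-get update\nCMD ["/bin/bash"]\n'
MINIMAL = 'FROM scratch\nCOPY hello /hello\nCMD ["/hello"]\n'

if __name__ == "__main__":
    for name, text in (("full", FULL_USERLAND), ("minimal", MINIMAL)):
        print(name, final_base_image(text), starts_from_scratch(text))
```

A check like this says nothing about whether an image is secure. It only shows how much of userland the build started with, which is the habit Julian Dunn is questioning.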

Conclusions

“Technologies last a very long time. Mikey Dickerson of the U.S. Digital Service spoke recently about how Medicare still primarily runs on 7000 COBOL jobs written piecemeal over the last 30 years. Nobody has a complete map of the dependencies between these jobs, or where to even start replacing them. Because patterns are so commonly copied, it’s important that for future generations, we try to minimize the bad solutions and make more good ones.”

Sadly, technology hype creates a reality distortion field that stops people from clearly assessing whether their use of that technology is a) appropriate and b) architected well.

Apcera Docker Solution

Josh Ellithorpe from Apcera presented this demo at DockerCon EU. What is Apcera? The best intuitive description is in this short blog post, 10X Savings.

What is the Apcera Docker solution? IMHO it addresses many (if not all) of Julian Dunn's concerns. Apcera proposes a process implemented as part of its platform capabilities, because container vulnerabilities stem from a combination of human error, intuition, and judgment about what is best for a container and what is not.

I use screenshots from Josh’s video to illustrate:

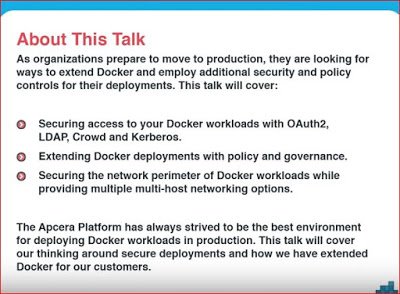

Enterprises moving to production are looking to extend Docker security and policy control in the three ways listed as bullets in the picture.

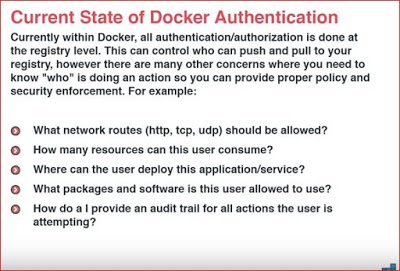

In this second slide, Apcera recommends add-ons for Docker that answer specific questions Docker so far addresses only at the registry level.

If my name is Mr. Container, then it is natural for me to ask: “What route am I allowed to take?” “How many resources can I use?” “Where am I allowed to land and deploy?” “Which software packages shall I use, and which ones should I never, never touch?” “How can someone always know what I am doing, someone who can help and correct my mistakes before it is too late?”
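Mr. Container's questions can be read as a policy check that runs before anything is deployed. The Python sketch below is a rough illustration of that idea only. It is not Apcera's API or policy language, and every class, field, and sample value in it is hypothetical.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class Policy:
    """Hypothetical policy: what a container may reach, use, and where it may run."""
    allowed_routes: FrozenSet[str]
    allowed_targets: FrozenSet[str]
    banned_packages: FrozenSet[str]
    max_memory_mb: int


@dataclass(frozen=True)
class ContainerRequest:
    """Hypothetical description of what a container asks for at deploy time."""
    routes: FrozenSet[str]
    target: str
    packages: FrozenSet[str]
    memory_mb: int


def policy_violations(request: ContainerRequest, policy: Policy) -> List[str]:
    """Return one message per broken rule; an empty list means the request passes."""
    problems = []
    # "What route am I allowed to take?"
    for route in sorted(request.routes - policy.allowed_routes):
        problems.append(f"route not allowed: {route}")
    # "How many resources can I use?"
    if request.memory_mb > policy.max_memory_mb:
        problems.append(
            f"memory {request.memory_mb} MB exceeds limit of {policy.max_memory_mb} MB"
        )
    # "Where am I allowed to land and deploy?"
    if request.target not in policy.allowed_targets:
        problems.append(f"deployment target not allowed: {request.target}")
    # "Which packages should I never touch?"
    for package in sorted(request.packages & policy.banned_packages):
        problems.append(f"banned package: {package}")
    return problems


# Hypothetical sample data for illustration.
EXAMPLE_POLICY = Policy(
    allowed_routes=frozenset({"db.internal:5432"}),
    allowed_targets=frozenset({"staging", "production"}),
    banned_packages=frozenset({"telnetd"}),
    max_memory_mb=512,
)
EXAMPLE_REQUEST = ContainerRequest(
    routes=frozenset({"db.internal:5432", "0.0.0.0/0"}),
    target="laptop",
    packages=frozenset({"nginx", "telnetd"}),
    memory_mb=1024,
)

if __name__ == "__main__":
    for problem in policy_violations(EXAMPLE_REQUEST, EXAMPLE_POLICY):
        print(problem)
```

The last question, about someone always knowing what the container is doing, is about auditing and would need logging around a check like this. The sketch leaves that out.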

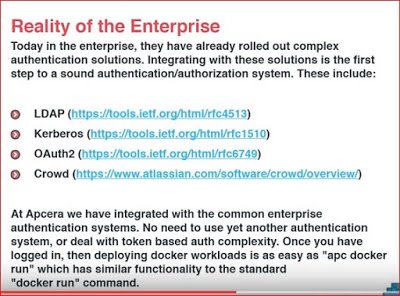

The first thing that raises enterprise suspicion is being asked to use authentication tools different from the ones they use now. So Apcera has a new command, “apc docker run”, to replace “docker run”. It uses all the authorization tools enterprises are already familiar with and trust.

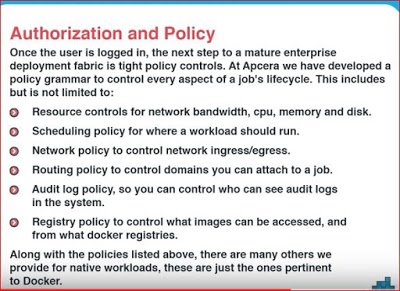

This last slide lists a sample of the essential policies Apcera can provide for native workloads pertinent to Docker.

The work that Apcera has done in collaboration with FlawCheck to test Docker images for known vulnerabilities before deploying them in production is described in this webinar.

After reading Julian Dunn's blog post, I can see how Apcera can minimize the enterprise fear of doom and instill trust when deploying Docker or any other new technology.

If there are more companies like Apcera, then Forrester's prediction (see above) will become an instant reality.

I am familiar with Apcera, but if you know of similar companies, I will be glad to hear from you. I know Docker acquired a few companies, like Tutum, and I interviewed Borja Burgos, its former CEO, in February 2014 (see Tutum is set to dockerize the Enterprise). However, Docker has so many directions to focus on that the elegant Apcera solution is still my #1 preference.

Reference: Why Docker containers are not used widely in Enterprises? from our JCG partner Miha Ahronovitz at The memories of a Product Manager blog.