ARM Virtualization – Applications (Part 4)

In the last few posts we discussed the hardware support needed to provide virtualization. In this post we look at how virtualization can empower the user. We’ll discuss the use cases we already see in the server and desktop space, as well as mobile-specific applications like big.LITTLE and lowering production costs for handsets. The first post in this series had an overview of virtualization. The second post went deeper into the features added to support virtualization in the core. The third post talked about the support needed at the system level for virtualization.

Server Space Applications

The biggest impact of virtualization has been in redefining the modern datacenter. Virtualization provides fault tolerance, VM migration, and sandboxing. Without virtualization we wouldn’t have services like EC2 that power many of the world’s biggest websites. With ARM and Intel rattling sabers for the last few years and the release of the Atom processor line by Intel, it was only a matter of time before ARM had to make a play for the server space, and to do this ARM needed a competing offering in virtualization. This is the biggest reason for providing hardware support for virtualization.

Using Cross Platform Applications

Many of us already use virtualization for this purpose. You can run a different OS on top of the current OS, or just run select services to support the current application. There can be extensions to this idea. A thin OS can be packed with an application to provide compatibility while running on a variety of platforms, similar to running a Java application but not requiring binary translation.

big.LITTLE

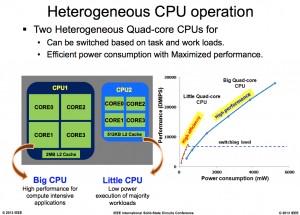

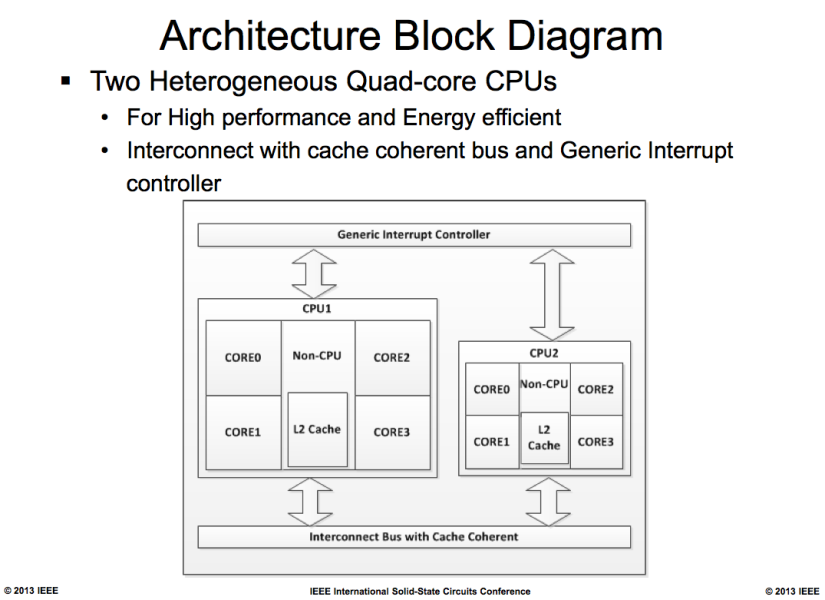

You may have heard of Samsung’s Exynos 5 Octa SoC. The highlight of this SoC is that it provides a cluster of four high-performance, high-power Cortex-A15 cores and a cluster of four low-power, lower-performance Cortex-A7 cores. Most of the time the performance requirements of a cell phone can be met by the low-power Cortex-A7 cores, and during those periods the high-power Cortex-A15 cores are powered down. When the workload demands more performance, execution migrates to the Cortex-A15 cluster. This migration can be done without virtualization, but with virtualization it falls naturally into VM migration and is significantly easier to implement in software.
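To make the switching idea concrete, here is a minimal sketch of a cluster-selection policy. It is a toy model, not how the Exynos 5 Octa or any real big.LITTLE switcher works: the function names, the load metric, and the two thresholds are all assumptions made up for illustration.

```python
# Toy model of big.LITTLE cluster selection. All names and thresholds
# here are hypothetical; a real switcher lives in firmware, the
# hypervisor, or the kernel and uses far richer inputs.

LITTLE = "Cortex-A7"
BIG = "Cortex-A15"


def pick_cluster(current, load, up=0.8, down=0.3):
    """Return the cluster to run on for a load in [0, 1].

    Two thresholds (hysteresis) keep the system from bouncing between
    clusters when the load hovers around a single cutoff.
    """
    if current == LITTLE and load > up:
        return BIG      # demand exceeds what the small cores can deliver
    if current == BIG and load < down:
        return LITTLE   # demand is low again, so power down the big cores
    return current


def simulate(loads, start=LITTLE):
    """Apply pick_cluster to a sequence of load samples."""
    cluster = start
    history = []
    for load in loads:
        cluster = pick_cluster(cluster, load)
        history.append(cluster)
    return history


if __name__ == "__main__":
    print(simulate([0.1, 0.9, 0.5, 0.2]))
```

With a hypervisor in place, the "migrate" step in a policy like this maps onto moving the guest from one cluster to the other, which is why it fits so naturally into VM migration.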

The figure, courtesy of Anandtech, gives an idea of the potential power savings from a big.LITTLE configuration. Although this slide gives an idea of the power and performance tradeoffs, a few points need to be made about the methodology. The first is that the tradeoff we’re measuring is not power vs performance. It is energy vs performance. The energy consumed to complete a task is the average power multiplied by the time the task takes, or equivalently the average power divided by the performance for that task, provided the core can enter a low power mode once the task is complete.
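The energy relationship above can be written out as a short calculation. This is only a sketch: the function name is mine, and the power and performance figures are invented for illustration, not taken from the Anandtech slide or from any measurement.

```python
def energy_joules(avg_power_watts, performance_tasks_per_sec, tasks=1.0):
    """Energy = average power * time, where time = tasks / performance.

    Assumes the core drops into a low power mode as soon as the work is
    done, so nothing is spent after the task completes.
    """
    if performance_tasks_per_sec <= 0:
        raise ValueError("performance must be positive")
    time_sec = tasks / performance_tasks_per_sec
    return avg_power_watts * time_sec


# Hypothetical numbers, for illustration only (not measured values).
little = energy_joules(avg_power_watts=0.5, performance_tasks_per_sec=2.0)
big = energy_joules(avg_power_watts=2.0, performance_tasks_per_sec=5.0)

if __name__ == "__main__":
    print(f"little core: {little:.2f} J per task")
    print(f"big core:    {big:.2f} J per task")
```

With these made-up figures the big core finishes sooner but still spends more energy per task, which is the distinction between a power comparison and an energy comparison.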

The second thing that caught my eye in that graph was the use of Dhrystone as a benchmark, as indicated by the unit DMIPS on the y-axis. Dhrystone is an old synthetic benchmark that no longer reflects real world usage. For example, the entire workload fits in the L1 cache, so it does not see any performance benefit from the larger L2 cache in the Cortex-A15, yet it also doesn’t reflect the increased power consumption from that L2 cache. There is no right or wrong answer as to which benchmark is the correct one to use. It always depends on the particular use case, but using SPECint would have been a more realistic indicator of system performance and power consumption.

Separating OS Kernel From Device Drivers

Virtualization can be used to separate the operating system from the underlying hardware. This separation can be done using one of the methods mentioned in the last post, and it has several practical applications. The first would be reducing development time and costs by making it easier to port an OS to a newer device. But the more important advantage would be in rolling out OS updates. This is a commonly seen problem with Android. After an Android update is rolled out, each of the device manufacturers has to port the changes to its own system. This significantly slows down the delivery of updates. Separating the OS from the underlying hardware can speed up the process of rolling out updates.

Separating Business From Pleasure

Our cellphones have become the biggest extension of our identity. They hold our contacts, our photos, our daily schedules, our emails, and our text communications. This mixing of personal and business information can cause problems. Right now we give IT administrators extensive control over our personal information. If IT believes my phone is stolen, they have the authority to issue a remote wipe of it. On the flip side, IT is still not able to protect corporate data appropriately. For example, I can still install a malware app on the system that can compromise the data stored on my phone. This problem can be solved by creating separate VMs for work and personal usage on phones, so that compromising the personal VM does not affect the corporate data. This point is discussed in a blog post by ARM.

Allow Different OSes On Same Device

Going back to reducing R&D costs, manufacturers can also create a single handset that runs different OSes, say Android or Windows Phone 8. Devices can be sold preloaded with installers for both OSes, and on first startup the user can choose which OS they want to use. This would reduce development and deployment costs at the price of a slight waste of Flash memory. But the idea can be extended further. A try-before-you-buy policy can be implemented, allowing a user to try a different OS than the one they’re used to, and if they don’t enjoy the experience they can revert to the system they are familiar with.

Security Features

The hypervisor provides a layer of privilege higher than the OS, and it can exclude the OS from accessing regions of memory. So the hypervisor can be used to store high security data like keys for financial accounts, hashes for checking application integrity, and yes, DRM. While the geek in me protests at walling off parts of the system I paid for, the pragmatist in me accepts that these features improve the user experience of someone like my grandmother and enable features that wouldn’t be implemented otherwise.

That concludes this post about the potential applications of virtualization. If you have any other ideas for applications of virtualization, I’d like to hear about them in the comments. Next week I’ll conclude the series with a comparison of the ARM virtualization extensions vs the offerings in the Intel world.