ARM Virtualization Extensions – Memory and Interrupts (Part 2)

In the first part of this series, I introduced the topic of virtualization. Today I will venture deeper into the ARM virtualization extensions for memory management and handling of interrupts. Within the core, virtualization mostly provides controls over the system registers. But as we move further from the core, and start to communicate with the outside world, difficulties and nuances in the problem start to emerge and the need for hardware support for virtualization becomes apparent.

As a note, this post will gloss over parts of the ARM architecture. To go deeper into the details of the implementation you can check out the ARM Architecture Reference Manuals. These can be found at infocenter.arm.com but require a free registration.

“Virtual” Virtual Memory

Virtualization requires that the guest OS cannot access the hypervisor’s memory space. Without virtualization extensions, this requirement is enforced with a technique called “shadow page tables”. In this technique, the OS maintains its own page tables, but the hypervisor prevents the OS from programming the Memory Management Unit (MMU) registers directly. Instead, the hypervisor creates its own page tables with its own mappings. When it sees a page fault, it reads the page tables created by the OS, recognizes the address as being mapped by the OS, and creates the actual mapping in the page tables that are used by the hardware MMU. So only the hypervisor, not the guest OS, holds the true Virtual Address (VA) to Physical Address (PA) translation. This approach has two problems: it complicates the hypervisor and it adds performance overhead.
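To make the shadow page table idea concrete, here is a minimal toy model in Python. It is only a sketch: the class name `ShadowPager`, the flat one-level dictionaries, and the 4KB page size are assumptions made for illustration and are not taken from any real hypervisor.

```python
PAGE_SIZE = 4096  # assumed 4KB pages


class ShadowPager:
    """Toy model of shadow paging; every name here is hypothetical."""

    def __init__(self, guest_page_table, guest_to_host):
        # Table maintained by the guest OS: virtual page -> guest "physical" page.
        self.guest_page_table = guest_page_table
        # Hypervisor's private map: guest "physical" page -> real physical page.
        self.guest_to_host = guest_to_host
        # Shadow table used by the (simulated) MMU: virtual page -> real page.
        self.shadow = {}
        self.faults = 0

    def translate(self, va):
        vpn, offset = divmod(va, PAGE_SIZE)
        if vpn not in self.shadow:
            # Page fault taken by the hypervisor: consult the guest's table
            # and install the real mapping in the shadow table.
            self.faults += 1
            if vpn not in self.guest_page_table:
                raise LookupError("no guest mapping; the fault belongs to the guest")
            guest_page = self.guest_page_table[vpn]
            self.shadow[vpn] = self.guest_to_host[guest_page]
        return self.shadow[vpn] * PAGE_SIZE + offset


pager = ShadowPager(guest_page_table={0x10: 0x2}, guest_to_host={0x2: 0x80})
first = pager.translate(0x10123)   # faults once, then maps the page
second = pager.translate(0x10456)  # same page, served from the shadow table
```

In this model the second access does not fault, but every first touch of a page costs a trip into the hypervisor, which is the overhead described above.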

With the ARM virtualization extensions, this work is performed by hardware. This simplifies the hypervisor while adding functionality and improving performance. The hypervisor essentially configures the hardware to treat the “physical” addresses generated by the guest OS as virtual addresses and adds another level of translation on these addresses. The ARM terminology for the guest OS “physical” address is Intermediate Physical Address (IPA). The translation from IPA to PA is called stage 2 translation, and the translation from VA to IPA is called stage 1 translation.

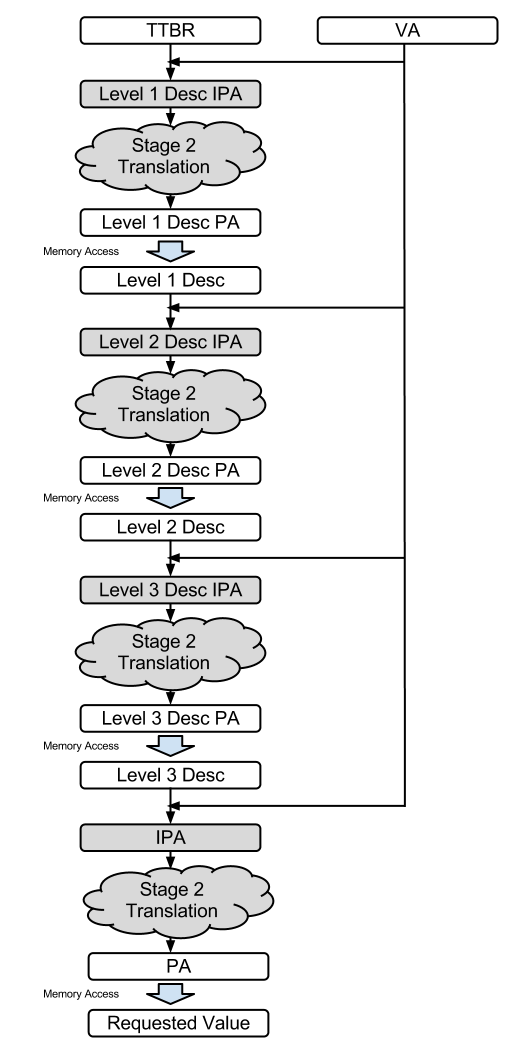

The figure shows how this translation proceeds. When virtualization is disabled, only stage 1 translation is performed. Stage 1 translation has 1 to 3 levels of tablewalks (depending on the page size, e.g., 4KB pages require 3 levels but 1GB pages require only one level). The first level uses the TTBR (Translation Table Base Register) and the VA to create the PA/IPA for the first descriptor access. Subsequent levels use the descriptor and the VA to generate the address of the next level descriptor, until the PA/IPA is known. The unshaded boxes in the figure show this process.

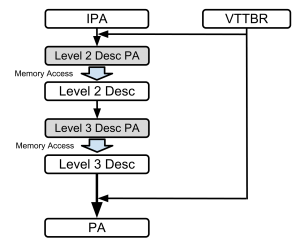

When virtualization is enabled, each of the translation levels has two stages, because the address of every stage 1 descriptor is itself an IPA. Stage 1 works as described above and outputs an IPA. Stage 2 takes the IPA and the VTTBR (Virtualization Translation Table Base Register) to create the descriptor address for the stage 2 tablewalk (as shown in the figure). Similar to the stage 1 tablewalk, the stage 2 tablewalk can have 1 to 3 levels.
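The two stages can be sketched as two chained lookups. This is a simplified illustration, assuming flat per-page dictionaries in place of real multi-level descriptor tables; the function names and the `Stage2Fault` exception are hypothetical.

```python
PAGE_SIZE = 4096  # assumed 4KB pages


class Stage2Fault(Exception):
    """Stands in for a fault taken to the hypervisor (hypothetical name)."""


def stage1(guest_table, va):
    # Guest-controlled translation: VA -> IPA.
    page, offset = divmod(va, PAGE_SIZE)
    return guest_table[page] * PAGE_SIZE + offset


def stage2(hyp_table, ipa):
    # Hypervisor-controlled translation: IPA -> PA.
    page, offset = divmod(ipa, PAGE_SIZE)
    if page not in hyp_table:
        raise Stage2Fault(hex(ipa))
    return hyp_table[page] * PAGE_SIZE + offset


def translate(guest_table, hyp_table, va, virtualization_enabled=True):
    ipa = stage1(guest_table, va)
    if not virtualization_enabled:
        return ipa  # with only stage 1, its output is already the PA
    return stage2(hyp_table, ipa)


guest_table = {0x400: 0x10}   # guest OS believes page 0x10 is physical
hyp_table = {0x10: 0x9000}    # hypervisor places it at real page 0x9000
pa = translate(guest_table, hyp_table, 0x400ABC)
```

The guest only ever sees and edits `guest_table`; the hypervisor alone decides where an IPA really lands.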

That’s A Lot Of Descriptors

The indirection added by a second stage tablewalk can quickly get very expensive. Memory accesses are already the most expensive operation, and with virtualization we may need up to 16 accesses to get the required data: each of the 3 stage 1 descriptor fetches needs a 3-level stage 2 walk plus the fetch itself (12 accesses), the final IPA needs another 3-level stage 2 walk (3 accesses), and the last access reads the data. Without virtualization, the results of translations are saved in Translation Lookaside Buffers (TLBs) to reduce the cost of page tablewalks. With virtualization, the cost of a tablewalk goes up significantly with the additional stage. In a processor with virtualization extensions enabled, not only do the TLBs need to be bigger, but the results of partial tablewalks are also saved to accelerate later tablewalks.
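The count of 16 follows from simple arithmetic, worked out below for a walk with no TLB hits. The function name is my own; the only inputs are the number of levels in each stage.

```python
def nested_walk_accesses(stage1_levels, stage2_levels):
    """Worst-case memory accesses for one load, assuming nothing is cached."""
    # Each stage 1 descriptor lives at an IPA, so fetching it costs a full
    # stage 2 walk plus the descriptor fetch itself.
    per_stage1_level = stage2_levels + 1
    # The final IPA produced by stage 1 needs one more stage 2 walk.
    final_ipa_walk = stage2_levels
    # Finally, the access to the data itself.
    data_access = 1
    return stage1_levels * per_stage1_level + final_ipa_walk + data_access


with_virtualization = nested_walk_accesses(3, 3)     # 3*4 + 3 + 1 = 16
without_virtualization = nested_walk_accesses(3, 0)  # 3*1 + 0 + 1 = 4
```

Going from 4 to 16 accesses per uncached load is why the extra TLB capacity and caching of partial walks matter so much.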

You Shall Not Pass

The second stage of translation includes permission controls that fault to the hypervisor. These are the standard controls for disabling any combination of reads, writes, or execution on a page by the guest OS or the applications running on it.

| Access | Example Use Case |
| --- | --- |
| No access | Waiting on an external update |
| Read only | Lazy VM copy |
| Write only | Output device |
| All access | Normal execution |
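A stage 2 permission check can be modeled as a bitmask comparison. The sketch below is an illustration only: the `Access` flags, the `check_stage2` helper, and the `Stage2PermissionFault` exception are hypothetical names, and real descriptors encode permissions in hardware-defined bit fields.

```python
from enum import Flag


class Access(Flag):
    NONE = 0
    READ = 1
    WRITE = 2
    EXECUTE = 4


class Stage2PermissionFault(Exception):
    """Stands in for the fault that transfers control to the hypervisor."""


def check_stage2(permissions, ipa_page, requested):
    # Pages the hypervisor has not listed are treated as "no access".
    allowed = permissions.get(ipa_page, Access.NONE)
    if (requested & allowed) != requested:
        raise Stage2PermissionFault(f"page {ipa_page:#x}: {requested} denied")
    return allowed


permissions = {
    0x10: Access.READ | Access.WRITE | Access.EXECUTE,  # normal execution
    0x11: Access.READ,                                  # e.g. lazy VM copy
    0x12: Access.WRITE,                                 # e.g. output device
}

check_stage2(permissions, 0x11, Access.READ)  # allowed, no fault
```

In the lazy copy case, a guest write to page `0x11` would raise the fault, giving the hypervisor the chance to copy the page and then grant write access.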

All Memory Is Not Equal

The second stage also allows the hypervisor to modify the memory attributes for a particular location, including marking it as device memory. Keep in mind that the ARM architecture only supports memory-mapped I/O, so I/O operations can be isolated through the page table mappings. The general rule is that the final attribute of memory is the more restrictive of the first stage and second stage attributes. For example, if the first stage marks memory as device and the second stage marks it as normal cacheable, the processor treats the memory as device memory, i.e., it disables caching and enforces the ordering requirements for device memory. The hypervisor can also arrange for a trap to the hypervisor when the guest accesses memory that is marked as device memory. The hypervisor can use this trap to communicate with the physical external hardware.
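The "more restrictive wins" rule can be sketched as taking the minimum over an ordering of memory types. This is a deliberately simplified model: the three-entry `MemType` ordering is my assumption, and the real architecture distinguishes more types and also combines shareability and cache policy.

```python
from enum import IntEnum


class MemType(IntEnum):
    # Lower value = more restrictive in this simplified ordering.
    DEVICE = 0
    NORMAL_NON_CACHEABLE = 1
    NORMAL_CACHEABLE = 2


def combine_attributes(stage1_type, stage2_type):
    # The final attribute is the more restrictive of the two stages.
    return min(stage1_type, stage2_type)


final = combine_attributes(MemType.DEVICE, MemType.NORMAL_CACHEABLE)
```

Here `final` is `MemType.DEVICE`, matching the example in the text, and the result is the same whichever stage supplies the device attribute.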

Virtual Interrupts

Dealing with device attributes is the first part of helping a guest OS communicate with the outside world. The next part is properly directing interrupts. The ARM architecture provides multiple ways of dealing with interrupts. The processor can be configured so that all interrupts are routed to the hypervisor. The hypervisor also has access to bits in the Hyp Configuration Register (HCR) for triggering a virtual interrupt in the guest OS. When an interrupt is asserted in the ARM architecture, the device holds the interrupt pin high until the OS tells the device to lower it. When running a guest OS, this protocol must be maintained. If the interrupt is triggered by the hypervisor using the HCR, a trap to the hypervisor is needed to clear the virtual interrupt.

The hypervisor may handle the interrupt in concert with the Generic Interrupt Controller (GIC, pronounced “gick”). The GIC is implemented as a memory-mapped I/O device that handles the priority and distribution of all the interrupts coming into the system. Without virtualization, the OS communicates the completion of an interrupt to the GIC. With virtualization, the physical interrupt is rerouted to the hypervisor. The hypervisor determines the correct VM to redirect the interrupt to and sets up the corresponding virtual interface for the GIC. It also sets up the second stage page tables so that the guest OS’s accesses to the GIC map to the GIC’s virtual CPU interface. From then on, the GIC knows how to reroute that interrupt and does not need hypervisor intervention.
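One way to picture this flow is the toy model below. It is only my reading of the description above, not the GIC programming interface: the class `ToyVirtualGic`, its methods, and the interrupt numbers are all hypothetical.

```python
from collections import deque


class ToyVirtualGic:
    """Toy model of interrupt rerouting; not the real GIC interface."""

    def __init__(self, routes):
        # Set up by the hypervisor:
        # physical interrupt ID -> (VM name, virtual interrupt ID).
        self.routes = routes
        self.pending = {}            # VM name -> queue of virtual interrupt IDs
        self.hypervisor_entries = 0  # how often the hypervisor had to run

    def physical_interrupt(self, irq):
        # The physical interrupt is taken by the hypervisor, which picks the
        # target VM and marks a virtual interrupt pending for it.
        self.hypervisor_entries += 1
        vm, virq = self.routes[irq]
        self.pending.setdefault(vm, deque()).append(virq)

    def guest_acknowledge(self, vm):
        # The guest talks to its virtual CPU interface directly, so this
        # step does not enter the hypervisor.
        queue = self.pending.get(vm)
        return queue.popleft() if queue else None


gic = ToyVirtualGic(routes={34: ("vm0", 5)})
gic.physical_interrupt(34)
virq = gic.guest_acknowledge("vm0")
```

The point of the model is the counter: the guest's side of the exchange runs without adding to `hypervisor_entries`.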

Memory management and interrupt virtualization only scratch the surface of the problems involved with sandboxing a virtual machine and providing the guest OS access to physical devices. Next week we will discuss the extensions to the rest of the system that support virtualization.

Resources

Reference: ARM Virtualization Extensions – Memory and Interrupts (Part 2) from our JCG partner Aater Suleman at the Future Chips blog.