Common sense and Code Quality

This article takes a simple, common-sense approach to code quality. The intent is to demystify code quality and help project teams pick the processes and tools that make sense for them.

To contain the scope of the article, I have restricted the rest of the discussion to a Java / J2EE based technology project in an enterprise scenario. The basic definition of quality, and the ways to ensure it, should be similar for projects that use other technology stacks or operate outside the corporate world, e.g. in the open source arena.

Who should care about code quality?

Let’s start with a quick questionnaire:

- Do you deliver and / or review code written in Java?

- Do you manage / update / configure any 3rd party product written in Java?

- Do you contribute code to any Java project which has legacy code?

- Do you contribute code to any Java project which has a sizeable number of classes (say more than 100), and do you want a grasp of the interdependence of those classes?

- Are you interested in assessing whether there are structural issues in a given Java project?

If the answer to any of these questions is yes, you should care about code quality.

The truth of the matter is that you might not have realized it yet, and code quality (measuring, ensuring, delivering) might not show up as a distinct item in your role and responsibilities. But it is only a matter of time before it catches up with you and causes grief if left unaddressed. It is much better to handle this monster proactively.

What is high quality code anyway?

If you google it or discuss it, you generally get two types of answers.

The first type is the generic *ity stuff (Flexibility, Reusability, Portability, Maintainability, Reliability, Testability etc.). While these are important, it is not always clear how exactly to measure them or how exactly to improve them.

The second type is highly specific technical parameters, e.g. cyclomatic complexity, afferent coupling, efferent coupling etc. There are well documented mathematical formulae to calculate these parameters and software that will calculate them for you, and it is relatively easy to get to a concrete action that will improve the numbers. However, converting an improvement in numbers into an improvement in code quality remains a specialized skill.

So, net net, there is no easy answer. Let’s try to change that. Let’s put together a series of questions that, going by common sense, anyone in a team that writes / maintains a high quality code base should be able to answer in the affirmative.

Question 1: Are you confident that as you add new code, none of the existing, working functionality will break?

Do you and your team check in code? I think it is safe to assume yes. Does an average developer on your team check in code more than once a day? Let’s assume yes. Is it possible for an average developer, on an average day, to know off the top of their head what all the other developers have checked in and how that code is supposed to work? No. Even if your team were made up entirely of Newtons and Einsteins, it is an emphatic no. So how do you ensure that, as the coders frantically churn out code, they are not breaking more than they are creating?

The answer should be unit testing. Cover as much code as you can with unit tests. (If your answer is something else, could you please leave a comment on the article? I would love to hear your suggestion.)
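As a minimal sketch of what such a safety net looks like, the example below pins down the behaviour of a small, hypothetical discount calculation. It uses plain Java checks so that it runs without any dependency; in a real project JUnit or TestNG would supply the assertions and the test runner. The class name, the method and the discount rule are all assumptions made for illustration.

```java
// Hypothetical example: the names and the discount rule are illustrative only.
class PriceCalculatorCheck {

    /** Code under test: price in cents after a whole-number percentage discount. */
    static long discountedCents(long priceCents, int discountPercent) {
        if (priceCents < 0 || discountPercent < 0 || discountPercent > 100) {
            throw new IllegalArgumentException("price or discount out of range");
        }
        return priceCents - (priceCents * discountPercent) / 100;
    }

    /** Framework-free stand-in for an assertion. */
    static void check(boolean condition, String message) {
        if (!condition) {
            throw new AssertionError(message);
        }
    }

    /** Each check documents one behaviour that later changes must not break. */
    static void runAll() {
        check(discountedCents(10_000, 0) == 10_000, "no discount keeps the price");
        check(discountedCents(10_000, 25) == 7_500, "25% off 100.00 is 75.00");
        check(discountedCents(999, 100) == 0, "a 100% discount is free");

        boolean rejected = false;
        try {
            discountedCents(100, 101);
        } catch (IllegalArgumentException expected) {
            rejected = true;
        }
        check(rejected, "a discount above 100% must be rejected");
    }

    public static void main(String[] args) {
        runAll();
        System.out.println("All checks passed");
    }
}
```

If someone later changes the rounding or the validation, one of these checks fails in the next build, which is exactly the early warning the team is after.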

Have an automated way of reporting the results of all unit tests to everyone in the team every morning. If unit tests are broken, fixing them gets the highest priority for the day.

Also send everyone in the team an automated report on the code coverage percentage every morning. Ideally the code coverage percentage should increase with every report. At the very least it should remain the same. If it goes down in any report, halt everything and investigate.
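The ‘never let coverage slip’ rule is simple enough to automate as part of the morning report. The sketch below assumes the build already knows the coverage percentage of the previous and the current report; the class, the method and the small tolerance parameter are hypothetical and not part of any of the tools mentioned in this article.

```java
// Hypothetical helper for a nightly build; not an API of Sonar, Cobertura or any other tool.
class CoverageGate {

    enum Verdict { IMPROVED, UNCHANGED, DROPPED }

    /**
     * Compares two coverage percentages (0 to 100).
     * Differences no larger than the tolerance are treated as noise and reported as UNCHANGED.
     */
    static Verdict compare(double previousPercent, double currentPercent, double tolerance) {
        double delta = currentPercent - previousPercent;
        if (delta > tolerance) {
            return Verdict.IMPROVED;
        }
        if (delta < -tolerance) {
            return Verdict.DROPPED;
        }
        return Verdict.UNCHANGED;
    }

    public static void main(String[] args) {
        // Illustrative figures only.
        Verdict verdict = compare(42.0, 41.2, 0.1);
        System.out.println("Coverage verdict: " + verdict);
        if (verdict == Verdict.DROPPED) {
            System.out.println("Coverage went down: investigate before doing anything else.");
        }
    }
}
```

Note that the check compares the project only against its own previous build, not against an external target.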

My common sense says that this has to be the most important code quality measure and process. (Again, if you have a different opinion, please leave a comment.) Fortunately, sorting out this bit is comparatively easy. Just use this toolset:

- Unit testing framework: JUnit, TestNG

- Unit test coverage tool: EclEmma, Cobertura

- A build tool: Maven, Ant

- A continuous integration tool: Jenkins, TeamCity

- A web dashboard for the report: Sonar

I am not saying this is the only or the best answer. All I am saying is that if you don’t have a better answer, this one is easy, free and it works.

One note of caution. Many times, when teams start with this, someone googles around and finds that good quality products are supposed to have 80% unit test coverage. In comparison, the team’s own product turns out to be in a much worse state. This has many implications, including morale and political issues. It is important to emphasize here that 80% code coverage in isolation does not guarantee anything. What really matters is to get a working process in place and continuously improve the test coverage.

Question 2: As you add new code, are you sure you are not committing the same silly mistakes that coders generally make? E.g. did you free up all resources in a finally block?

Anyone who codes makes mistakes. You are lucky if the compiler catches them for you and reports an error. But what about those that the compiler does not catch, but that the coding community knows from experience to be bad code? If you worked on banking software a decade ago, the only way to catch the silly mistakes was to have someone senior on the team review your code. Things have not changed much. You should still have an extra pair of eyes look at your code and design. But luckily there is some help as well. You could use this toolset:

- A source code analyzer: PMD, Checkstyle, FindBugs, Crap4j

- A build tool: Maven, Ant

- A continuous integration tool: Jenkins, TeamCity

- A web dashboard for the report: Sonar

Again, I am not saying this is the only or the best answer. All I am saying is that if you don’t have a better answer, this one is easy, free and it works.

One note of caution. Most projects that start with these tools are inundated with hundreds (if not thousands) of items flagged by the source code analyzers. It is very important to spend some time upfront with these tools and throttle the reporting. Fortunately, it is very easy to add / delete rules, effectively configuring the analyzers to report only what you and your team think is worth flagging. The trick is to ensure that the rules are relevant to your team and that the reports are treated with the utmost respect. It is no good if the tools keep reporting a bunch of issues while nobody in the team is convinced that they are relevant, or nobody is sure who is expected to fix them.
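The resource question from Question 2 is a typical example of what these analyzers look for. The sketch below shows the classic pattern of releasing a resource in a `finally` block next to the try-with-resources form available since Java 7, which closes the resource automatically. `TrackedResource` is a hypothetical stand-in for a stream or a database connection.

```java
class ResourceHandling {

    /** Hypothetical resource that records whether it has been closed. */
    static class TrackedResource implements AutoCloseable {
        boolean closed = false;

        String read() {
            if (closed) {
                throw new IllegalStateException("already closed");
            }
            return "data";
        }

        @Override
        public void close() {
            closed = true;
        }
    }

    /** Classic style: the finally block runs whether or not read() throws. */
    static String readWithFinally(TrackedResource resource) {
        try {
            return resource.read();
        } finally {
            resource.close();
        }
    }

    /** Java 7+ style: the resource is closed automatically when the block exits. */
    static String readWithTryWithResources(TrackedResource resource) {
        try (TrackedResource r = resource) {
            return r.read();
        }
    }

    public static void main(String[] args) {
        TrackedResource first = new TrackedResource();
        System.out.println(readWithFinally(first) + ", closed = " + first.closed);

        TrackedResource second = new TrackedResource();
        System.out.println(readWithTryWithResources(second) + ", closed = " + second.closed);
    }
}
```

Code that calls `read()` and then `close()` without either construct leaks the resource whenever `read()` throws, and that is the kind of mistake a reviewer or an analyzer should catch.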

I will draw part 1 of this article to a close here. The first couple of questions that we have discussed in this article, I believe, are the most important. They should be taken up first by any technology project which sees value in having a handle on the quality of code. The next part will touch on advanced topics like structural analysis, mutation testing etc.

Structural Analysis

In this article about ‘code quality’ I am going to talk about ‘quality of the software structure’ in particular.

This series of articles takes a simple, common-sense approach to code quality. The intent is to demystify code quality and help project teams pick the processes and tools that make sense for them.

I am going to try to keep the article as simple as I can. However, readers should be aware that this topic, i.e. ‘structural analysis of software code’, has been and continues to be a fairly involved subject. Mathematicians and computer scientists were publishing seminal work on it as early as the 1970s.

Fortunately, some excellent material is available on this subject in the public domain. I would particularly like to call out the following works that I have relied on heavily for data used in this article.

1. ‘A Complexity Measure’ published in IEEE TRANSACTIONS ON SOFTWARE ENGINEERING, VOL. SE-2, NO. 4, DECEMBER 1976 by Thomas J. McCabe.

2. ‘A Metrics Suite for Object Oriented Design’ published in IEEE TRANSACTIONS ON SOFTWARE ENGINEERING, VOL. 20, NO. 6, JUNE 1994, by Shyam R. Chidamber and Chris F. Kemerer.

3. ‘OO Design Quality Metrics, An Analysis of Dependencies’ in 1994 by Robert Martin.

4. ‘Design Principles and Design Patterns’ published by Robert Martin.

Let me try and present the gist of my interpretation of these works, in the following sections.

Patterns

Let’s start by listing the fundamental patterns of bad code structure. These are intuitive in nature and do not have a mathematical or scientific definition.

| Patterns of Structural Flaws | Definition and Explanation |
|---|---|
| Rigidity | The software is difficult to change. |
| Fragility | Making changes in one part of the software causes breakage in a conceptually unrelated part of the software. |
| Immobility | It is difficult to move components of the code around because the code is not sufficiently modular. |
| Viscosity | Wrong practices are so deep rooted in the software that it is easier to continue with the wrong practices than to introduce the right ones. |
| Opacity | The system is difficult to understand. |

Metrics

The metrics are concrete, measurable items with scientific and mathematical definitions. Standard tools are available that will measure them for your code base. Of course, the list of metrics and tools given here is not exhaustive.

| Metric | Definition and Explanation | Tools |
|---|---|---|
| Number of Classes | If a comparison is made between projects with identical functionality, the projects with more classes are usually better abstracted, up to a point. You would still want to keep this number from growing needlessly. Applying OO concepts well should help. | Sonar (free, open source) |
| Lines of Code (LOC) | If a comparison is made between projects with identical functionality, the projects with fewer lines of code have the superior design and require less maintenance. | Sonar (free, open source) |
| Number of Children (NOC) | The number of immediate sub-classes of a class. Try to keep it down, or the class hierarchy becomes too complex. | Stan4J |
| Response for Class (RFC) | The count of all methods implemented within the class plus the number of other methods that those methods call directly. Try to keep it low. The higher the RFC, the higher the effort needed to make changes. | Sonar (free, open source), Stan4J |
| Depth of Inheritance Tree (DIT) | Maximum inheritance path from the class to the root class. Try to keep it under 5. | Stan4J |
| Weighted Methods Per Class (WMC) | Sum of the complexities of the methods defined in a class. If every method is given a weight of 1, this is simply the number of methods. Try to keep it under 14. | Stan4J |
| Coupling between Object Classes (CBO) | Number of classes to which a class is coupled. Try to keep it under 14. | Stan4J |
| Lack of Cohesion of Methods (LCOM4) | Measures the number of ‘connected components’ in a class. A low value suggests that the code is simpler and reusable. A high value suggests that the class should be broken up into smaller classes. Try to break down the class if this metric becomes higher than 2. | Sonar (free, open source), Stan4J |
| Cyclomatic Complexity (CC) | Measure of the number of different executable paths through a module. A higher number of executable paths means more effort to test the code completely. This in turn makes it more difficult to understand and change. Try to keep this value under 10. | JDepend (free, open source) |
| Distance (D) | Distance from the idealized line A + I = 1, i.e. the absolute value of A + I - 1. The smaller the distance of your software from the idealized line, the better. Abstractness (A) = Na / Nc, where Na is the number of abstract classes and Nc the total number of classes in a package. Instability (I) = Ce / (Ce + Ca), where Ce is the efferent (outgoing) and Ca the afferent (incoming) coupling. | JDepend (free, open source), Stan4J |
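The Distance row packs three formulas into one cell, so a worked example may help. The sketch below follows the definitions in Robert Martin’s paper as I understand them: `Na` is the number of abstract classes in a package, `Nc` the total number of classes, `Ce` and `Ca` the efferent (outgoing) and afferent (incoming) couplings, and the distance is the absolute value of A + I - 1. The class and method names are hypothetical, and returning 0 for an empty or completely uncoupled package is my own assumption.

```java
class MainSequenceDistance {

    /** Abstractness A = Na / Nc. */
    static double abstractness(int abstractClasses, int totalClasses) {
        return totalClasses == 0 ? 0.0 : (double) abstractClasses / totalClasses;
    }

    /** Instability I = Ce / (Ce + Ca). */
    static double instability(int efferent, int afferent) {
        int total = efferent + afferent;
        return total == 0 ? 0.0 : (double) efferent / total;
    }

    /** Distance from the idealized line A + I = 1. */
    static double distance(double abstractness, double instability) {
        return Math.abs(abstractness + instability - 1.0);
    }

    public static void main(String[] args) {
        // Hypothetical package: 2 abstract classes out of 10,
        // 6 outgoing and 2 incoming dependencies.
        double a = abstractness(2, 10);  // 2 / 10 = 0.2
        double i = instability(6, 2);    // 6 / (6 + 2) = 0.75
        System.out.printf("A = %.2f, I = %.2f, D = %.2f%n", a, i, distance(a, i));
    }
}
```

In this made-up case the package sits close to the idealized line: it is fairly concrete (low A) but also fairly unstable (high I), so few other packages are hurt when it changes.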

So, the metrics are there, and so are the thresholds and the tools to report on them. If you read up on the material I quoted at the beginning of the article, you will find many more metrics. I can safely recommend the use of at least Sonar and the basic metrics that Sonar reports on. That is the very least that any enterprise grade software should have.
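Of the entries in the table above, LCOM4 is perhaps the least obvious, so here is a rough sketch of the idea. Two methods are treated as related when they touch a common field (a fuller implementation would also link methods that call each other), and LCOM4 is the number of groups of related methods. The input map from method names to field names is a hypothetical, hand-written model of a class; real tools derive it from the source or the bytecode.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Collections;
import java.util.Deque;
import java.util.HashSet;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.Set;

class Lcom4Sketch {

    /** Counts groups of methods linked, directly or transitively, by a shared field. */
    static int lcom4(Map<String, Set<String>> fieldsUsedByMethod) {
        List<String> methods = new ArrayList<>(fieldsUsedByMethod.keySet());
        Set<String> visited = new HashSet<>();
        int components = 0;
        for (String start : methods) {
            if (!visited.add(start)) {
                continue; // already assigned to an earlier group
            }
            components++;
            Deque<String> pending = new ArrayDeque<>();
            pending.push(start);
            while (!pending.isEmpty()) {
                String current = pending.pop();
                for (String other : methods) {
                    boolean sharesField = !Collections.disjoint(
                            fieldsUsedByMethod.get(current), fieldsUsedByMethod.get(other));
                    if (sharesField && visited.add(other)) {
                        pending.push(other);
                    }
                }
            }
        }
        return components;
    }

    public static void main(String[] args) {
        // Hypothetical class that mixes two unrelated responsibilities.
        Map<String, Set<String>> model = new LinkedHashMap<>();
        model.put("getName", Collections.singleton("name"));
        model.put("setName", Collections.singleton("name"));
        model.put("connect", Collections.singleton("socket"));
        model.put("disconnect", Collections.singleton("socket"));
        System.out.println("LCOM4 = " + lcom4(model)); // two groups: name and socket
    }
}
```

The two groups found here correspond to the two smaller classes that the modelled class could be split into.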

As I mentioned in the first article of this sequence, start by measuring. Compare the figures for the same project build on build and make small incremental changes. Small baby steps in the right direction, taken diligently build on build, will do wonders. Just don’t go for the big kill or measure yourself against the so-called ‘industry standard’, and everything should be all right.

Beyond Metrics

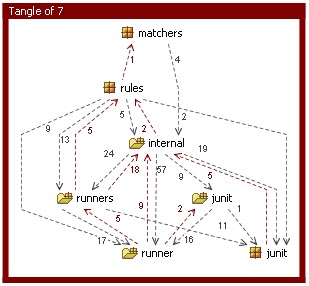

With all due respect to the metrics, their utility is limited to doing a health check on the existing code. Given the number of metrics and the plethora of tools to measure them (add the conflicting views among technocrats about the efficacy of the tools and metrics), it soon gets confusing. It is like looking at an admin panel with all the dials and bulbs going berserk while you frantically try to figure out how to appease them all. What you also need is a tool that analyses all these metrics and points straight at the complicated and vulnerable parts of your code. Of course, it helps if it does so with an intuitive visual interface. There are a few products that do just this (unfortunately, none of them is free). I have used and quite liked Structure 101. It reports on ‘fat’ packages, classes, designs etc., which is its way of saying that it considers these artifacts excessively complex and hence tentative candidates for refactoring / restructuring. These artifacts generally have tangles (cyclic dependencies), and the tool does an excellent job of showing those.
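A tangle is simply a cycle in the dependency graph, so the core check is easy to sketch even though the commercial tools do far more. The example below runs a depth-first search over a hypothetical, hand-written map of package dependencies. It is only an illustration of the concept and makes no claim about how Structure 101 works internally.

```java
import java.util.Arrays;
import java.util.Collections;
import java.util.HashMap;
import java.util.HashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;

class TangleDetector {

    /** Returns true if the dependency graph contains at least one cycle. */
    static boolean hasCycle(Map<String, List<String>> dependsOn) {
        Set<String> finished = new HashSet<>();
        Set<String> onPath = new HashSet<>();
        for (String node : dependsOn.keySet()) {
            if (visit(node, dependsOn, finished, onPath)) {
                return true;
            }
        }
        return false;
    }

    private static boolean visit(String node, Map<String, List<String>> dependsOn,
                                 Set<String> finished, Set<String> onPath) {
        if (onPath.contains(node)) {
            return true;  // we came back to a node on the current path: a cycle
        }
        if (finished.contains(node)) {
            return false; // already fully explored, no cycle through this node
        }
        onPath.add(node);
        for (String next : dependsOn.getOrDefault(node, Collections.emptyList())) {
            if (visit(next, dependsOn, finished, onPath)) {
                return true;
            }
        }
        onPath.remove(node);
        finished.add(node);
        return false;
    }

    public static void main(String[] args) {
        // Hypothetical package names.
        Map<String, List<String>> packages = new HashMap<>();
        packages.put("web", Arrays.asList("service"));
        packages.put("service", Arrays.asList("dao"));
        packages.put("dao", Arrays.asList("service")); // dao depends back on service
        System.out.println("Tangle found: " + hasCycle(packages));
    }
}
```

Removing the dependency from `dao` back to `service` makes the graph acyclic again, which is usually the goal of the restructuring.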

That brings me to the end of this article. In conclusion, I just want to say that creating simple code is a complex business. It is not (only) labor. It is skill. And as with all skills, mastering the tools of the trade is important. Knowing which tools to pick from the free open source basket and which ones to pay for (because they are worth it) is crucial.

In the next article we will talk about creating future state architecture for the project (assuming it is a long running support and upgrade project) and how to measure increased conformance of the code base to future state architecture, build by build.

Until then, happy coding.

Reference: Common sense and Code Quality – Part 1, Common sense and Code Quality – Part 2 from our JCG partner Partho at the Tech for Enterprise blog.