Introduction into GraalVM (Community Edition): GraalVM for JVM applications

1. Introduction

In this part of the tutorial we are going to continue our journey to learn more about the GraalVM project, focusing specifically on the integration with the JVM platform. In order to understand how GraalVM fits into the overall picture, we probably should start off by decomposing it into a set of building blocks.

2. Decomposing GraalVM

In the previous part of the tutorial we briefly discussed some of the key components GraalVM consists of, but there are certainly more. Let us briefly go over them again.

- GraalVM compiler: written in Java, can integrate with the Java HotSpot VM or run standalone

- Substrate VM: ahead-of-time (AOT) compilation of Java/JVM applications into executable images or shared objects

- Truffle: language implementation framework for creating languages for GraalVM

- Sulong: an engine for running LLVM bitcode on GraalVM

- GraalWasm: an engine for running WebAssembly programs on GraalVM

- Tools: a number of tools to debug and monitor the applications on the GraalVM platform

Whereas we are going to talk about GraalVM polyglot capabilities later on, what interests us in the context of JVM integration are GraalVM compiler, Substrate VM and a subset of applicable tools. When you download the GraalVM distribution for the platform of your choice, you are getting most of these components bundled in. A fact that you may not be aware of is that the GraalVM compiler might be already available (but not activated by default) in the JDK distribution you are using today to run your applications and services.

3. Using GraalVM with OpenJDK

The ahead-of-time compilation capability (JEP-295), in the form of the jaotc tool, was first introduced in the OpenJDK 9 release as an experimental feature. It used the GraalVM compiler as the code-generating backend. Shortly after, in the scope of JEP-317 (Experimental Java-Based JIT Compiler) shipped in the OpenJDK 10 release, the GraalVM compiler itself became available as an experimental JIT compiler.

Following the six-month release cycle, both OpenJDK 9 and OpenJDK 10 reached their end of life a while ago. Realistically speaking, as of today, the majority of JVM applications and services are still running on JDK 8, which basically means that jaotc and the GraalVM compiler are out of reach (spoiler alert: more on this shortly) for most deployments.

But chances are that you are running on an LTS release, such as OpenJDK 11, or balancing on the cutting edge with OpenJDK 15 (the latest OpenJDK release available as of today). In either case, you are all set to benefit from the GraalVM compiler, although it is still marked as an experimental feature. To turn it on, it is sufficient to add the -XX:+UnlockExperimentalVMOptions -XX:+UseJVMCICompiler command line arguments to your JVM startup options.

$ java -XX:+UnlockExperimentalVMOptions -XX:+UseJVMCICompiler <...>

To be strict, we also have to add -XX:+EnableJVMCI, but it is turned on automatically when the -XX:+UseJVMCICompiler option is present. More importantly, the GraalVM compiler is not loaded as a native shared library, since that library is not present in the OpenJDK distributions by default. If you have it available, it can be enabled using two additional command line options, -XX:+UseJVMCINativeLibrary and -XX:JVMCILibPath=<path>, available since the OpenJDK 13 release. Last but not least, please check JDK-8232118 for more ways to alter JVMCI command line arguments.

It is worth noting that JVMCI and the GraalVM compiler have a number of configurable options that can be specified by system properties prefixed with jvmci.* and graal.* respectively. These properties must be set on the JVM command line, and the list of all supported properties can be retrieved by including the -XX:+JVMCIPrintProperties command line argument.

$ java -XX:+UnlockExperimentalVMOptions -XX:+EnableJVMCI -XX:+JVMCIPrintProperties

But what if you are stuck with JDK 8? If switching to the respective GraalVM distribution is not an option for you, consider taking a look at the fork of jdk8u/hotspot with support for JVMCI. You should be able to find a JVMCI-enabled distribution for your exact version of JDK 8, or even build your own.

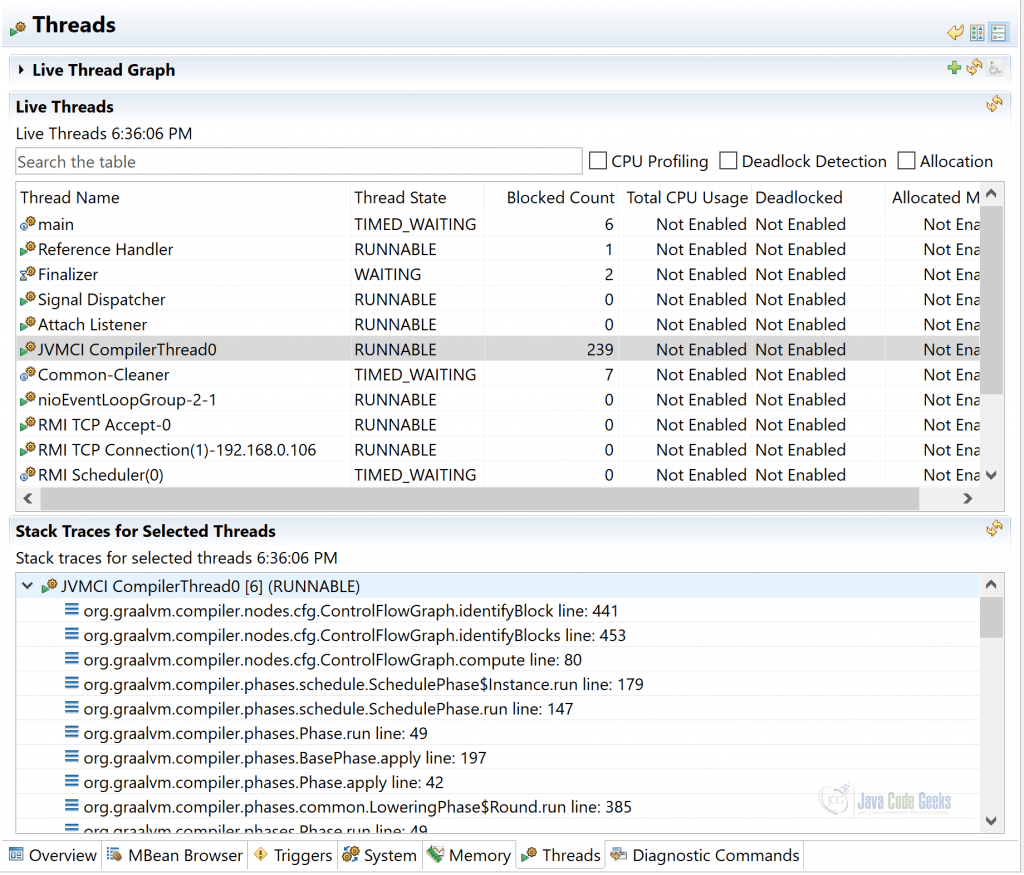

As a bonus, swapping the JIT compiler does not require any changes to existing applications and services, since they are expected to run fully compatibly out of the box. Still, GraalVM != JVM, so it is better to run comprehensive test suites in order to identify possible regressions or other issues. But first, how do you even know your JVM is actually using the GraalVM compiler? There are many ways to collect the proof, but probably the easiest and most visually pleasing one is to use JDK Mission Control or VisualVM. While inspecting the running JVM processes in question, you should be able to spot additional JVMCI Compiler threads with GraalVM compiler frames in their stack traces.
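If you prefer a programmatic check over a visual one, the JVM flags themselves can be inspected from inside the process. The sketch below is only an illustration: the class name JvmciCheck is made up, and it assumes a HotSpot-based JVM that exposes HotSpotDiagnosticMXBean. Flags that are unknown to the JVM, or still locked because -XX:+UnlockExperimentalVMOptions was not passed, are simply reported as not available.

```java
import com.sun.management.HotSpotDiagnosticMXBean;
import java.lang.management.ManagementFactory;
import java.util.Optional;

// Hypothetical helper (not part of GraalVM or OpenJDK): reports JVMCI-related VM flags.
final class JvmciCheck {

    // Returns the current value of a HotSpot VM flag, or empty if this JVM
    // does not expose the flag (unknown, locked, or not a HotSpot-based JVM).
    static Optional<String> flagValue(String name) {
        HotSpotDiagnosticMXBean bean =
                ManagementFactory.getPlatformMXBean(HotSpotDiagnosticMXBean.class);
        if (bean == null) {
            return Optional.empty();
        }
        try {
            return Optional.of(bean.getVMOption(name).getValue());
        } catch (IllegalArgumentException e) {
            // getVMOption rejects names it cannot resolve
            return Optional.empty();
        }
    }

    public static void main(String[] args) {
        String[] flags = {"EnableJVMCI", "UseJVMCICompiler", "UseJVMCINativeLibrary"};
        for (String flag : flags) {
            System.out.println(flag + " = " + flagValue(flag).orElse("<not available>"));
        }
    }
}
```

Running it once with and once without the -XX:+UnlockExperimentalVMOptions -XX:+UseJVMCICompiler options should make the difference visible.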

What does the future of GraalVM integration with OpenJDK look like? The plans are not clear yet, but major changes are already being baked into the upcoming OpenJDK 16 release. The jaotc tool is gone and further work on integrating Graal into OpenJDK has moved to Project Metropolis. Maybe it is worth looking into GraalVM distributions after all.

4. Using GraalVM distribution

20.3.0 is the latest GraalVM Community release at the time of writing. It comes in two flavors, OpenJDK 8 and OpenJDK 11: the first one is based on OpenJDK version 1.8.0_272, whereas the second one is based on OpenJDK version 11.0.9. The respective distribution for the platform of your choice can be downloaded directly from the project’s official GitHub page.

Besides JVMCI support and libgraal (the GraalVM compiler compiled ahead-of-time into a native shared library), the distribution comes with:

- VisualVM which includes heap analysis features for the supported guest languages

- GraalVM Updater: a command line utility used to install and manage optional GraalVM language runtimes and utilities

For the rest of the tutorial we are going to use GraalVM 20.3.0 with OpenJDK 11. The only thing you have to change is to point your JAVA_HOME environment variable to the GraalVM distribution. The official documentation has a number of guides to help you get started.

For many JVM applications and services this could be quite sufficient, but we are not going to stop here. Let us talk about Substrate VM and ahead-of-time (AOT) compilation of Java/JVM applications into executable images or shared objects (collectively called native images).

5. Going Native

The ability to produce native executables or shared libraries is probably the most sought-after feature of GraalVM. What is behind the curtain? Let us try to figure it out.

5.1. Substrate VM

If Substrate VM does not ring a bell, do not worry: it is quite possible you have never heard of it. However, it is the driving force behind ahead-of-time (AOT) compilation and the packaging of JVM applications and services as standalone native executables. But how, you may wonder?

Any code written for the JVM needs a JVM to run. The JVM does not only include the JIT compiler, but also a garbage collector, thread scheduling and many other runtime components. When a JVM application or service is packaged as a native executable, the JVM is not needed anymore, but the runtime components the JVM provides are still required. This is exactly what Substrate VM does: it becomes a part of the native executable and serves as a provider of these necessary services.

Cool, at least one mystery of how native executables have become the real thing is uncovered. It is time to look at how we could build native images.

5.2. Native Image Builder

The GraalVM ecosystem comes with a tool called native-image, also known as native image builder. It is not part of the distribution but rather an optional component.

The Native Image builder or native-image is a utility that processes all classes of an application and their dependencies, including those from the JDK. It statically analyzes these data to determine which classes and methods are reachable during the application execution. Then it ahead-of-time compiles that reachable code and data to a native executable for a specific operating system and architecture. This entire process is called building an image (or the image build time) to clearly distinguish it from the compilation of Java source code to bytecode.

It could be installed with gu (aka GraalVM Updater), a command line utility used to install and manage optional GraalVM components.

$ gu install native-image
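To make the distinction between compiling to bytecode and building an image more tangible, here is a deliberately tiny program to try the builder on. The class name and the two commands in the trailing comment are an assumed minimal workflow for illustration, not an excerpt from the official guides.

```java
// HelloNative.java: a minimal candidate for a first native image build.
final class HelloNative {

    // Kept separate from main so the logic is easy to call and verify.
    static String greeting(String name) {
        return "Hello, " + name + "!";
    }

    public static void main(String[] args) {
        String name = args.length > 0 ? args[0] : "native image";
        System.out.println(greeting(name));
    }
}

// Assumed workflow, run from the directory containing the file:
//   javac HelloNative.java      (Java source -> bytecode)
//   native-image HelloNative    (bytecode -> native executable)
```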

The native-image builder supports not only Java but all JVM-based languages. Before you get too excited, it is worth noting that not all applications or services can be packaged as native images (either executables or shared libraries). There are a number of limitations you should be aware of, and for certain types of applications and services going native may not be practical.

The official documentation covers native-image in great detail and, to be fair, there is a lot of it. We are not going to copy/paste all that but will instead highlight the most interesting subjects and the areas to pay particular attention to.

With native images, there is no JIT involved anymore. All the code is pre-compiled into machine instructions which depend on the target platform. Interestingly, working with a native image is separated into two phases: build time and run time. This is one of the reasons the command line arguments of the native-image executable are split into two distinct groups:

- Prefixed with -H (hosted options): configure a native image build

- Prefixed with -R (runtime options): initial values are set during a native image build but could be overridden at runtime (usually with the -XX prefix)

It is highly recommended to perform as much initialization as possible during the build phase so as to reduce the startup time. By default, classes are initialized at image run time, but this behavior is adjustable through the --initialize-at-build-time and --initialize-at-run-time command line options. To track which classes were initialized and why, pass along the handy -H:+PrintClassInitialization command line option.

Even as a native executable, your application or service still consumes memory. Suitable heap settings will be determined automatically based on the system configuration and the GC used, but it is still possible to provide your own configuration with the -Xmx, -Xms and -Xmn command line options at runtime.
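The effect of build-time versus run-time initialization is easiest to see with a static initializer that captures a timestamp. The following is a minimal sketch with made-up class names (InitDemo, BuildStamp). The assumption is that building with --initialize-at-build-time=BuildStamp bakes the captured value into the image, so the reported age keeps growing between runs, whereas with the default run-time initialization it stays close to zero.

```java
// Hypothetical demo: shows when a static initializer actually ran.
final class InitDemo {

    // Milliseconds elapsed since BuildStamp was initialized.
    static long ageMillis() {
        return System.currentTimeMillis() - BuildStamp.INITIALIZED_AT;
    }

    public static void main(String[] args) {
        System.out.println("BuildStamp was initialized " + ageMillis() + " ms ago");
    }
}

// Hypothetical holder class whose initialization time we want to observe.
final class BuildStamp {

    // Not a compile-time constant, so it is assigned in the static initializer.
    static final long INITIALIZED_AT = System.currentTimeMillis();

    private BuildStamp() {
    }
}
```

The same pattern is a reminder of the flip side: anything captured during the build (timestamps, environment details, random seeds) is frozen into the executable, which is not always what you want.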

$ <your native executable> -Xmx32m -Xms16m

Alternatively, the native-image executable gives you a choice to pre-set the same heap settings at build time using the -R:MaxHeapSize, -R:MinHeapSize and -R:MaxNewSize command line options respectively.

$ native-image -R:MaxHeapSize=32m -R:MinHeapSize=16m ...
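A quick way to confirm which heap limits are actually in effect is to ask the runtime itself. The sketch below (the class name HeapReport is made up) relies only on the standard java.lang.Runtime API, so the assumption is that it behaves the same on the JVM and inside a native image.

```java
// Hypothetical helper: prints the heap limits the running process sees.
final class HeapReport {

    // Converts a byte count to whole megabytes (rounding down).
    static long toMegabytes(long bytes) {
        return bytes / (1024L * 1024L);
    }

    public static void main(String[] args) {
        Runtime runtime = Runtime.getRuntime();
        System.out.println("max heap:   " + toMegabytes(runtime.maxMemory()) + " MB");
        System.out.println("total heap: " + toMegabytes(runtime.totalMemory()) + " MB");
        System.out.println("free heap:  " + toMegabytes(runtime.freeMemory()) + " MB");
    }
}
```

Running the resulting executable with and without -Xmx32m should be enough to see whether the setting was picked up.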

Thinking upfront about the expected memory requirements is quite important, since the default (and only) garbage collector (GC) available in GraalVM Community Edition for native images is Serial GC. It is optimized for a low memory footprint and small heap sizes. Some limitations also apply: keep in mind that finalizers are not invoked. Finalization was deprecated long ago, and the recommended replacement is weak references and/or reference queues.

The handling of system properties deserves its own mention. You can use them with your native images in the usual way, applying the -D prefix, but remember the separation of the build and run stages:
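For code that still relies on finalizers, a reference queue can take over the cleanup. The following is one possible sketch rather than a prescribed pattern: CleanupTracker is a made-up class that pairs each tracked object with a cleanup action and runs the action once the collector has enqueued the object's phantom reference. The action must not reference the tracked object, otherwise the object never becomes unreachable.

```java
import java.lang.ref.PhantomReference;
import java.lang.ref.Reference;
import java.lang.ref.ReferenceQueue;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

// Hypothetical finalizer replacement built on a reference queue.
final class CleanupTracker {

    private final ReferenceQueue<Object> queue = new ReferenceQueue<>();

    // Keeps the references themselves strongly reachable until they are processed.
    private final Map<Reference<?>, Runnable> actions = new ConcurrentHashMap<>();

    // Registers a cleanup action to run after 'owner' becomes unreachable.
    Reference<?> register(Object owner, Runnable action) {
        Reference<?> ref = new PhantomReference<>(owner, queue);
        actions.put(ref, action);
        return ref;
    }

    // Runs the actions of all references enqueued so far; returns how many ran.
    int drain() {
        int ran = 0;
        Reference<?> ref;
        while ((ref = queue.poll()) != null) {
            Runnable action = actions.remove(ref);
            if (action != null) {
                action.run();
                ran++;
            }
        }
        return ran;
    }
}
```

In this sketch drain() has to be called by the application, for example periodically or from a dedicated thread, which keeps the timing of the cleanup explicit.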

- Passing -D<key>=<value> to the native-image executable affects properties seen at image build time

- Passing -D<key>=<value> to a native image executable affects properties seen at image run time
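The two cases above can be tried out with a program that reads a single property. This is a sketch only: the property name demo.greeting and the class name PropsDemo are invented for the example, and the commands in the trailing comment merely restate the two cases from the list.

```java
// Hypothetical demo of reading a system property at run time.
final class PropsDemo {

    // "demo.greeting" is a made-up property name used only for this sketch.
    static final String KEY = "demo.greeting";

    // Looked up on every call, so it reflects the properties of the running process.
    static String greeting() {
        return System.getProperty(KEY, "hello (default)");
    }

    public static void main(String[] args) {
        System.out.println(greeting());
    }
}

// native-image -Ddemo.greeting=build PropsDemo
//     the value is visible to code that executes during the image build
// <your native executable> -Ddemo.greeting=run
//     the value is visible to greeting() when the executable runs
```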

Since the GraalVM 20.3.0 release, native-image has become container aware: on Linux, resource limits like the processor count and available memory size are read from the cgroup configuration. The processor count can also be overridden on the command line using the -XX:ActiveProcessorCount=N option. Also, for containerized environments it is highly desirable to install the same signal handlers that the JVM has. The --install-exit-handlers command line option gives us that.

One more thing: with native-image, only a subset of URL protocols is supported by default, so you can start with a minimal footprint and add features later on. To enable the HTTP and/or HTTPS protocols, you have to pass the --enable-http and/or --enable-https command line arguments to the native-image executable. Alternatively, you may prefer the --enable-url-protocols command line option, which accepts a fine-grained list of the URL protocols to enable.

The same reasoning is behind the Java Cryptography Architecture (JCA) support in the native-image builder: only core security services are bundled by default. Additional JCA security services must be enabled by including the --enable-all-security-services command line option (which is on by default when HTTPS URL protocol support is enabled).
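A small probe can tell you which protocols a given build actually supports. The sketch below uses a made-up class name (ProtocolCheck) and rests on one assumption: that a protocol which was not enabled at build time surfaces as a MalformedURLException, the same way an unknown protocol does on the regular JVM.

```java
import java.net.MalformedURLException;
import java.net.URL;

// Hypothetical probe for URL protocol support in the current runtime.
final class ProtocolCheck {

    // Returns true if a URL with the given protocol can be constructed.
    // No connection is opened; only the protocol handler lookup is exercised.
    static boolean isAvailable(String protocol) {
        try {
            new URL(protocol + "://localhost/");
            return true;
        } catch (MalformedURLException e) {
            return false;
        }
    }

    public static void main(String[] args) {
        for (String protocol : new String[] {"file", "http", "https"}) {
            System.out.println(protocol + ": " + (isAvailable(protocol) ? "available" : "not available"));
        }
    }
}
```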

A final remark about logging: out of the box, native-image supports logging capabilities using the java.util.logging APIs only.

Dealing with dynamic proxies, reflection and resources will probably cause the most headaches for the majority of developers. Luckily, the assisted configuration of native image builds can substantially reduce the effort required to successfully package existing applications and services as native images. It basically relies on a Java agent which observes the application while it runs on the JVM and dumps all the required configuration afterwards.

The standalone native executable is probably the kind of native image you are going to produce most often. However, you can also package a shared library (if needed) by supplying the --shared command line argument to the native-image builder.
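To see why reflection is troublesome, consider a class that is only known by name at run time. In the sketch below (ReflectiveLoader is a made-up name), static analysis cannot tell which class will be instantiated, so the builder needs configuration for it. The agent invocation in the trailing comment follows the documented -agentlib:native-image-agent form, with the output directory left as a placeholder.

```java
// Hypothetical example of reflective access that static analysis cannot resolve.
final class ReflectiveLoader {

    // Instantiates a class by name through its no-argument constructor.
    static Object instantiate(String className) {
        try {
            return Class.forName(className).getDeclaredConstructor().newInstance();
        } catch (ReflectiveOperationException e) {
            throw new IllegalStateException("cannot instantiate " + className, e);
        }
    }

    public static void main(String[] args) {
        String className = args.length > 0 ? args[0] : "java.util.ArrayList";
        System.out.println("created " + instantiate(className).getClass().getName());
    }
}

// Run on the JVM with the agent attached to record what was accessed reflectively:
//   java -agentlib:native-image-agent=config-output-dir=<dir> ReflectiveLoader java.util.ArrayList
```

The agent only records the code paths that were actually exercised, so the quality of the generated configuration depends on how thoroughly the application was driven during that run.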

5.3. Tooling

Most projects on the JVM platform use Apache Maven, Gradle or SBT as the build orchestration tool. The generation of native images as part of the build process is actually very well supported by all of them, thanks to the plugin ecosystem.

- Native Image Maven Plugin

- A Gradle plugin which creates native images

- Generate native-image binaries with sbt

Below is the snippet of the Apache Maven build definition which produces the native executable along with the regular JAR artifacts.

<plugin>
    <groupId>org.graalvm.nativeimage</groupId>
    <artifactId>native-image-maven-plugin</artifactId>
    <version>20.3.0</version>
    <executions>
        <execution>
            <goals>
                <goal>native-image</goal>
            </goals>
            <phase>package</phase>
        </execution>
    </executions>
    <configuration>
        <mainClass>...</mainClass>
        <imageName>...</imageName>
        <buildArgs>...</buildArgs>
    </configuration>
</plugin>

For many existing projects you may need to go beyond the simple configurations and exploit the advanced techniques like substitution of whole classes or methods during the image build. GraalVM provides a way to do that by bringing in the Substrate VM APIs.

<dependency>
    <groupId>org.graalvm.nativeimage</groupId>
    <artifactId>svm</artifactId>
    <version>20.3.0</version>
    <scope>provided</scope>
</dependency>

To benefit from GraalVM polyglot capabilities within existing applications and services, you could use an official GraalVM SDK, a collection of APIs for the components of GraalVM.

<dependency>
    <groupId>org.graalvm.sdk</groupId>
    <artifactId>graal-sdk</artifactId>
    <version>20.3.0</version>
    <scope>provided</scope>
</dependency>

Beyond these leading tools, it is very likely that the build tool and/or framework of your choice already provides a pretty smooth experience with respect to native images and GraalVM in general. In any case, you can always fall back to calling the command line tools directly.

5.4. Debugging and Troubleshooting

Because the native-image builder generates a native binary, the latter does not include a Java Virtual Machine Tool Interface (JVMTI) implementation, so the standard JVM debugging techniques are not going to work. Native debuggers like gdb are the only option for understanding what is going on inside native images at runtime. For the same reason, tools that work with JVM bytecode are not supported at all.

Besides the run time, you can also troubleshoot the image build phase. It is sufficient to add the --debug-attach[=<port>] command line option and attach a debugger during image generation.

To gain some insights on garbage collection, you could add the following command line options when executing a native image:

- -XX:+PrintGC: print basic information for every garbage collection

- -XX:+VerboseGC: print further garbage collection details

What about heap dumps, you may ask? It is not possible to trigger heap dump creation using the standard command line tools which come with OpenJDK, nor with JDK Mission Control or VisualVM. But you could use the GraalVM SDK and implement heap dump generation programmatically from within your application or service.

6. Native or JIT?

Once you have learned how to package your Java applications and services as native images, an obvious question arises: why should anyone ever go back to the JVM? As always, the answer to this question is “it depends”, and we are going to summarize the pros and cons of both.

6.1. Native Image (SubstrateVM)

The benefits of running JVM applications and services as native executables are tremendous, but so are the drawbacks and limitations.

On the pros side:

- self-contained native executables

- very fast startup times

- smaller memory footprint

- smaller executable size

- more predictable performance

On the cons side:

- no JIT compiler, so peak performance may not be optimal

- only simple garbage collector (in case of community edition)

- not always easy to package a native image

- image builds are very slow (though build times are improving)

- tools like JDK Flight Recorder (JFR) are not supported yet

- need to use native debuggers

So what kind of applications and services are going to benefit the most from being packaged as native image? The ones for which the following statements hold true:

- Startup time matters

- Memory footprint matters

- Small to medium-sized heaps (few gigabytes at most)

- All code is known ahead of time

- Optimal performance is not the main concern

If you are thinking about FaaS and serverless – these are probably the best examples to point out.

6.2. JVM with GraalVM Compiler

On the other side, let us enumerate the benefits and drawbacks of running JVM applications on the Java HotSpot VM in tandem with the GraalVM compiler.

On the pros side:

- It is just regular JVM

- Excellent peak performance (thanks to JIT optimizations)

- Large or small heap sizes

- A range of garbage collectors available

- A lot of familiar tooling

On the cons side:

- Usual footprint of a JVM (could be shrunk with jlink)

- Account for JVM startup times

- May take longer than C2 to reach peak performance

The sensible conclusion at this point is that you are very likely better off running your applications and services the traditional way (JVM with GraalVM compiler), unless there are obvious wins in going native.

6.3. What about GraalVM Compiler?

I hope the trade-offs of running your applications and services natively versus on the JVM with JIT are somewhat clarified. But we should not forget that the same considerations apply to the GraalVM compiler itself, since it can be used as libgraal (a precompiled native image) or jargraal (dynamically executed bytecode).

Although libgraal is the default and recommended mode of operation, please keep in mind that there are some consequences:

- Since this is a native image, it may not reach the same peak performance as jargraal

- The size of the shared library is noticeable

On the other hand, jargraal is not all roses and has a number of flaws as well:

- Being bytecode means it interferes with the application bytecode, affecting the heap, code caches, optimizations, …

- It may take time to get to the optimal mode of operation

A more direct measurement of the compilation speed can be obtained with the -XX:+CITime command line option. Following the recommendation and sticking to libgraal is probably a wise decision; nonetheless, it is always good to understand what you are trading off.
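As a rough in-process complement to -XX:+CITime, the standard management API exposes the accumulated JIT compilation time. The sketch below uses a made-up class name (CompileTime) and makes no claim about how the JVM attributes time between its compilers; it only reads what CompilationMXBean reports, or -1 when the JVM does not report anything.

```java
import java.lang.management.CompilationMXBean;
import java.lang.management.ManagementFactory;

// Hypothetical helper: reads the JIT compilation time reported by the JVM.
final class CompileTime {

    // Accumulated compilation time in milliseconds, or -1 if not reported.
    static long totalMillis() {
        CompilationMXBean jit = ManagementFactory.getCompilationMXBean();
        if (jit == null || !jit.isCompilationTimeMonitoringSupported()) {
            return -1;
        }
        return jit.getTotalCompilationTime();
    }

    public static void main(String[] args) {
        CompilationMXBean jit = ManagementFactory.getCompilationMXBean();
        System.out.println("JIT compiler: " + (jit == null ? "<none>" : jit.getName()));
        System.out.println("total compilation time: " + totalMillis() + " ms");
    }
}
```

Calling totalMillis() at the end of a representative workload, once per compiler configuration, gives a coarse number to compare.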

7. GraalVM and other JVMs

So far we have always referred to OpenJDK and the Java HotSpot VM as the platforms GraalVM integrates with. What about other Java VMs: could you use the GraalVM compiler with, let us say, Eclipse OpenJ9? Unfortunately, it does not seem to be possible at the moment, since Eclipse OpenJ9 does not implement JVMCI yet (the necessary prerequisite to plug the GraalVM compiler in). The plans regarding possible JVMCI support are uncertain.

8. GraalVM VisualVM

The VisualVM tool was mentioned a number of times throughout this part of the tutorial. It is a great way to gain visual insights into the applications and services running on the JVM. It used to be a part of the JDK distribution but was removed in Oracle JDK 9 and onwards.

Since then VisualVM has moved to GraalVM and is available in two distributions: standalone (Java VisualVM) and bundled with the GraalVM distribution. In the latter form, VisualVM adds special support for GraalVM polyglot features, such as analyzing applications at the guest language level: JavaScript, Python, Ruby and R are supported.

9. GraalVM in Your IDE

If you download the GraalVM distribution, to your IDE it feels (and looks) like a regular OpenJDK distribution, no surprises here. In the case of Apache NetBeans, IntelliJ IDEA or Eclipse, the same experience of developing, running and debugging your JVM applications and services applies.

Additionally, the integration with Visual Studio Code is conveniently provided by GraalVM Extension (in a technology preview stage).

The major goal of creating GraalVM VS Code Extension is to enable a polyglot environment in VS Code, to make it suitable and convenient to work with GraalVM from an integrated development environment, to allow VS Code users to edit and debug applications written in any of the GraalVM supported dynamic languages (JS, Ruby, R, and Python).

From personal experience, in recent years Visual Studio Code has gained a lot of traction among Java and Scala developers. It has become the default IDE for many, and the presence of the dedicated GraalVM Extension just adds to its other perks.

10. Case Studies

It is time to take a look at some widely popular projects in the JVM ecosystem and follow their route towards supporting GraalVM, and native images in particular. Those are well-established brands with many years of active development behind them, born at a time when GraalVM did not even exist. Please do not hesitate to use them as blueprints for your own applications and services.

10.1. Netty

Netty is one of the foundational frameworks for building high performance network applications on the JVM. Its popularity has skyrocketed in the recent years, partly due to widespread adoption of the reactive programming paradigm.

Netty is an asynchronous event-driven network application framework for rapid development of maintainable high performance protocol servers & clients.

Netty is certainly among the early supporters of GraalVM, which in turn cleared (or at least simplified) the way for downstream projects to follow its lead.

10.2. Spring

On the JVM, the Spring platform was and still is a dominant technical stack for building all kinds of applications and services. It is very mature and battle-tested in production, its portfolio is very impressive, and it is really hard to find any more or less popular project Spring has no integration with. That is a strength and a weakness at the same time, particularly when core technical implementation decisions are in conflict with current GraalVM limitations.

As of today, native image support in Spring projects is marked as experimental and is hosted in a separate repository. It is implemented largely by leveraging the advanced substitution capabilities provided by the Substrate VM APIs. Hopefully, by the time Spring Framework 6 sees the light of day, the experimental label is going to be dropped, making native image support for Spring-based applications and services ready for prime time.

11. The Road Ahead

The best way to wrap up our discussion is to touch upon the ongoing and future developments aimed at eliminating a number of existing limitations, especially those associated with native image generation.

- Support for the Java Platform module system (JPMS): https://github.com/oracle/graal/issues/1962

- Support for method handles and the invokedynamic bytecode: https://github.com/oracle/graal/issues/2761

- Improve support for resource bundles and locales: https://github.com/oracle/graal/issues/2982

The pace of changes in GraalVM is extraordinary and it should not take long before we see at least some of them addressed in the next releases. Besides that, it is worth keeping an eye on the following ongoing work.

- Support for Java serialization: https://github.com/oracle/graal/pull/2730

- Support dynamic class loading: https://github.com/oracle/graal/pull/2442

- Add JDK Flight Recorder support for Java native images: https://github.com/oracle/graal/pull/3070

Those are just a small selection out of hundreds of issues and pull requests. Help is always wanted, so please feel free to join the community and start contributing!

12. What’s Next

In the next part of the tutorial we are going to talk about cloud computing, the challenges it poses to JVM applications and services, how GraalVM is addressing those, and a new generation of cloud-native libraries and frameworks.