Gentle Introduction to Hystrix

In the last few days I have been exploring the Netflix Hystrix library and have come to appreciate the features provided by this excellent library.

To quote from the Hystrix site:

Hystrix is a latency and fault tolerance library designed to isolate points of access to remote systems, services and 3rd party libraries, stop cascading failure and enable resilience in complex distributed systems where failure is inevitable.

There are a whole lot of keywords to parse here, however the best way to experience Hystrix in my mind is to try out a sample use case.

An unpredictable Service

Consider a service, an odd one, which takes a json message of the following structure and returns an acknowledgement:

{

"id":"1",

"payload": "Sample Payload",

"throw_exception":false,

"delay_by": 0

}The service takes in a payload, but additionally takes in two fields – delay_by which makes the service acknowledge a response after the delay in milliseconds and a “throw_exceptions” field which will result in an exception after the specified delay!

Here is a sample response:

{

"id":"1",

"received":"Sample Payload",

"payload":"Reply Message"

}If you are following along, here is my github repo with this sample, I have used Netflix Karyon 2 for this sample and the code which handles the request can be expressed very concisely the following way – see how the rx-java library is being put to good use here:

import com.netflix.governator.annotations.Configuration;

import rx.Observable;

import service1.domain.Message;

import service1.domain.MessageAcknowledgement;

import java.util.concurrent.TimeUnit;

public class MessageHandlerServiceImpl implements MessageHandlerService {

@Configuration("reply.message")

private String replyMessage;

public Observable<MessageAcknowledgement> handleMessage(Message message) {

logger.info("About to Acknowledge");

return Observable.timer(message.getDelayBy(), TimeUnit.MILLISECONDS)

.map(l -> message.isThrowException())

.map(throwException -> {

if (throwException) {

throw new RuntimeException("Throwing an exception!");

}

return new MessageAcknowledgement(message.getId(), message.getPayload(), replyMessage);

});

}

}At this point we have a good candidate service which can be made to respond with an arbitrary delay and failure.

A client to the Service

Now onto a client to this service. I am using Netflix Feign to make this call, yet another awesome library, all it requires is a java interface annotated the following way:

package aggregate.service;

import aggregate.domain.Message;

import aggregate.domain.MessageAcknowledgement;

import feign.RequestLine;

public interface RemoteCallService {

@RequestLine("POST /message")

MessageAcknowledgement handleMessage(Message message);

}It creates the necessary proxy implementing this interface using configuration along these lines:

RemoteCallService remoteCallService = Feign.builder()

.encoder(new JacksonEncoder())

.decoder(new JacksonDecoder())

.target(RemoteCallService.class, "http://127.0.0.1:8889");I have multiple endpoints which delegate calls to this remote client, all of them expose a url pattern along these lines – http://localhost:8888/noHystrix?message=Hello&delay_by=0&throw_exception=false, this first one is an example where the endpoint does not use Hystrix.

No Hystrix Case

As a first example, consider calls to the Remote service without Hystrix, if I were to try a call to http://localhost:8888/noHystrix?message=Hello&delay_by=5000&throw_exception=false or say to http://localhost:8888/noHystrix?message=Hello&delay_by=5000&throw_exception=true, in both instances the user request to the endpoints will simply hang for 5 seconds before responding.

There should be a few things immediately apparent here:

- If the service responds slowly, then the client requests to the service will be forced to wait for the response to come back.

- Under heavy load it is very likely that all threads handling user traffic will be exhausted, thus failing further user requests.

- If the service were to throw an exception, the client does not handle it gracefully.

Clearly there is a need for something like Hystrix which handles all these issues.

Hystrix command wrapping Remote calls

I conducted a small load test using a 50 user load on the previous case and got a result along these lines:

================================================================================ ---- Global Information -------------------------------------------------------- > request count 50 (OK=50 KO=0 ) > min response time 5007 (OK=5007 KO=- ) > max response time 34088 (OK=34088 KO=- ) > mean response time 17797 (OK=17797 KO=- ) > std deviation 8760 (OK=8760 KO=- ) > response time 50th percentile 19532 (OK=19532 KO=- ) > response time 75th percentile 24386 (OK=24386 KO=- ) > mean requests/sec 1.425 (OK=1.425 KO=- )

Essentially a 5 second delay from the service results in a 75th percentile time of 25 seconds!, now consider the same test with Hystrix command wrapping the service calls:

================================================================================ ---- Global Information -------------------------------------------------------- > request count 50 (OK=50 KO=0 ) > min response time 1 (OK=1 KO=- ) > max response time 1014 (OK=1014 KO=- ) > mean response time 22 (OK=22 KO=- ) > std deviation 141 (OK=141 KO=- ) > response time 50th percentile 2 (OK=2 KO=- ) > response time 75th percentile 2 (OK=2 KO=- ) > mean requests/sec 48.123 (OK=48.123 KO=- )

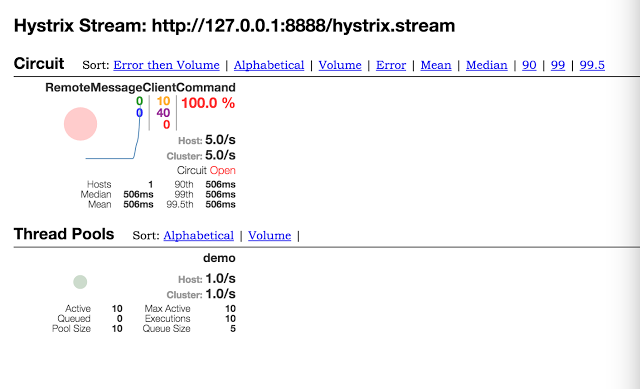

Strangely the 75th percentile time now is 2 millseconds!, how is this possible, and the answer becomes obvious using the excellent tools that Hystrix provides, here is a Hystrix dashboard view for this test:

What happened here is that the first 10 requests timed out, anything more than a second by default times out with Hystrix command in place, once the first ten transactions failed Hystrix short circuited the command thus blocking anymore requests to the remote service and hence the low response time. On why these transactions were not showing up as failed, this is because there is a fallback in place here which responds to the user request gracefully on failure.

Conclusion

The purpose here was to set the motivation for why a library like Hystrix is required, I will follow this up with the specifics of what is needed to integrate Hystrix into an application and the breadth of features that this excellent library provides.

| Reference: | Gentle Introduction to Hystrix from our JCG partner Biju Kunjummen at the all and sundry blog. |