9 Security mistakes every Java Developer must avoid

Checkmarx CxSAST is a powerful Source Code Analysis (SCA) solution designed for identifying, tracking and fixing technical and logical security flaws from the root: the source code. Check it out here!

Java has come a long way since it was introduced in mid-1995. Its cross-platform characteristics have made it the benchmark when it comes to client-side web programming. But that widespread usage and distribution has also made it a prime target, and with cybercrime and hacking reaching epidemic levels, secure Java development has become essential.

A recent Kaspersky Lab report mentions Java as the most attacked programming language, with more and more hacking incidents being reported worldwide. Java’s susceptibility is largely due to its fragmentation problem: not all developers are using the latest version (Java 9), which means that the latest security updates are not always applied.

The growing consensus within application security circles is that high code integrity is the best way to make sure applications are robust and immune to the top hacking techniques. The following article contains 9 recommendations loosely based on the OWASP Java project, a comprehensive effort to help Java and J2EE developers produce robust applications.

9 Coding App Sec Malpractices Every Java Developer Must Avoid

AppSec Malpractice No.1 – Not Restricting Access to Classes and Variables

A class, method or variable left public in Java is basically an open invitation to the bad guys. Make sure all of these are set to private by default. This automatically improves the robustness of your application code and closes off potential attack avenues. Accessors should be used to limit accessibility, and anything left non-private should be clearly documented.
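As a minimal sketch of this advice, the class below keeps its state private and exposes it only through accessors that validate input. The class name `UserProfile` and its field are hypothetical and chosen only for illustration. Note that access modifiers restrict ordinary compiled code; on their own they are not a barrier against reflective access.

```java
// Hypothetical example class; all names are illustrative assumptions.
final class UserProfile {
    // State is private, so other classes must go through the accessors.
    private String displayName;

    UserProfile(String displayName) {
        setDisplayName(displayName);
    }

    public String getDisplayName() {
        return displayName;
    }

    // The setter is the single place where the value is validated.
    public void setDisplayName(String displayName) {
        if (displayName == null || displayName.trim().isEmpty()) {
            throw new IllegalArgumentException("displayName must not be blank");
        }
        this.displayName = displayName.trim();
    }
}
```

Because every write passes through `setDisplayName`, a rule such as "never blank" only has to be enforced in one place.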

AppSec Malpractice No.2 – Depending on Initialization

Developers should be aware that objects can be instantiated in Java without a constructor ever being run. The existence of alternate ways to allocate non-initialized objects is a security issue that must be taken care of. The ideal solution is to write classes so that their objects verify initialization before performing any action.

This can be achieved by following these steps:

- All variables should be made private. External code should be able to access these variables only through get and set methods.

- Each object should have a private boolean variable named initialized added to it, set to true as the last step of each constructor.

- All non-constructor methods should verify that initialized is true before doing anything.

- If you are implementing static initializers, make sure all static variables are private and use a private static boolean named classInitialized. As above, static methods (and constructors) must verify that classInitialized is true before doing anything.
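The steps above can be sketched roughly as follows. This is an illustration under assumptions, not a definitive implementation: the class `SessionStore`, its `owner` field, and the helper `requireInitialized` are hypothetical names.

```java
// Hypothetical class illustrating the initialization check; names are assumptions.
final class SessionStore {
    // Set to true only as the last step of the constructor.
    private boolean initialized = false;
    private String owner;

    SessionStore(String owner) {
        if (owner == null) {
            throw new IllegalArgumentException("owner must not be null");
        }
        this.owner = owner;
        this.initialized = true;
    }

    // Every non-constructor method calls this before doing anything else.
    private void requireInitialized() {
        if (!initialized) {
            throw new IllegalStateException("SessionStore is not initialized");
        }
    }

    public String getOwner() {
        requireInitialized();
        return owner;
    }
}
```

An instance that was allocated without the constructor completing would still have `initialized` set to false, so `getOwner` would fail instead of returning unvalidated state.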

AppSec Malpractice No.3 – Not Finalizing Classes

Many Java developers forget to make classes (or methods) final. This is a malpractice that can potentially enable the hacker to extend the class in a malicious manner. Declaring the class non-public and relying on the package scope restrictions for security can prove to be costly. Make all classes final, and document any class that genuinely needs to remain non-final.
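A short sketch of both options, using hypothetical class names: a class that is final as a whole, and a class that stays open for extension but marks its security-relevant method final.

```java
// Hypothetical names. A final class cannot be subclassed at all.
final class TokenValidator {
    public boolean isValid(String token) {
        return token != null && token.length() == 32;
    }
}

// Deliberately non-final: documented here as an extension point.
class BaseHandler {
    // final: subclasses cannot override the security-relevant check.
    public final boolean isAuthorized(String role) {
        return "admin".equals(role);
    }

    // Non-final on purpose; subclasses may customize the description.
    public String describe() {
        return "base handler";
    }
}
```

The compiler rejects any attempt to extend `TokenValidator` or to override `isAuthorized`, so a subclass cannot silently replace the check.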

AppSec Malpractice No.4 – Relying on Package Scope

Another problem of medium severity in the Java programming language is the use of packages. Packages assist in the development process because they group classes, methods and variables for easy access. But hackers can potentially introduce rogue classes into your package, enabling them to access and modify the data located within it.

Hence, it’s advised to assume packages are not closed and are potentially insecure.

AppSec Malpractice No.5 – Minimize the Use of Inner Classes

There has been a traditional practice of putting all privileged code into inner classes, which has been proven to be insecure. Java bytecode has no notion of inner classes, so they are compiled into ordinary classes that get only a package-level security mechanism. To make matters worse, an inner class gets access to the fields of the outer class even if those fields are declared private.

Inner classes reduce the size and complexity of the Java application code, arguably making it less buggy and more efficient. But these classes can be exploited by injecting bytecode into the package. The best way to go is not to use inner classes at all, but if you do, make sure they are declared private.
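Where a nested class is still wanted, a minimal sketch of the "declare it private" advice might look like the following. The names `AuditLog` and `Formatter` are hypothetical, and making the nested class static is an additional assumption here, chosen so that it holds no implicit reference to an enclosing instance.

```java
// Hypothetical names throughout.
final class AuditLog {
    private final StringBuilder entries = new StringBuilder();

    // Private static nested class: not nameable outside AuditLog, and it
    // carries no hidden reference to an enclosing AuditLog instance.
    private static final class Formatter {
        String format(String user, String action) {
            return user + ":" + action + "\n";
        }
    }

    private final Formatter formatter = new Formatter();

    public void record(String user, String action) {
        entries.append(formatter.format(user, action));
    }

    public String dump() {
        return entries.toString();
    }
}
```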

AppSec Malpractice No.6 – Hard Coding

One of the most common security blunders committed by Java developers is the hard-coding of sensitive information. This programming error involves the insertion of sensitive passwords, user IDs, PINs and other personal data inside the code. Sensitive information should be stored within a protected directory in the development tree.
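One way to keep secrets out of the source is to load them at runtime from a properties file kept in a protected location. The sketch below assumes a hypothetical `SecretStore` class and a hypothetical key such as `db.password`; the file path and key names are illustrative and not prescribed by any standard.

```java
import java.io.IOException;
import java.io.InputStream;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.Properties;

// Hypothetical helper; class, method and key names are assumptions.
final class SecretStore {
    private final Properties props;

    SecretStore(Properties props) {
        this.props = props;
    }

    // Loads key=value pairs from a file that is access-restricted
    // and kept out of the compiled code.
    static SecretStore fromFile(Path file) throws IOException {
        Properties loaded = new Properties();
        try (InputStream in = Files.newInputStream(file)) {
            loaded.load(in);
        }
        return new SecretStore(loaded);
    }

    // Fails fast instead of falling back to a hard-coded default.
    char[] require(String key) {
        String value = props.getProperty(key);
        if (value == null || value.isEmpty()) {
            throw new IllegalStateException("Missing required secret: " + key);
        }
        return value.toCharArray();
    }
}
```

Returning a `char[]` rather than a `String` lets the caller overwrite the secret once it is no longer needed.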

AppSec Malpractice No.7 – Allowing Sensitive Data to Be Echoed to the UI

Reading input through the standard java.io streams does nothing to hide sensitive data as it is typed, and the keytool application that comes by default with the JDK echoes user input. Java developers should make sure that a fixed character (commonly “*”) is displayed for all keystrokes. For example, javax.swing.JPasswordField can be used in Swing GUI applications.

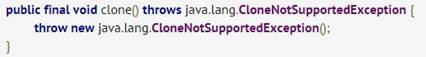
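For console programs, one option is `java.io.Console.readPassword`, which has been part of the JDK since Java 6 and reads a line with echoing disabled. The sketch below wraps it in a hypothetical `PasswordPrompt` helper; the class and method names are assumptions made for illustration.

```java
import java.io.Console;
import java.util.Arrays;

// Hypothetical helper; names are illustrative assumptions.
final class PasswordPrompt {
    private PasswordPrompt() {
    }

    // Returns the typed password, or null when System.console()
    // reports that the JVM has no console available.
    static char[] read(String prompt) {
        Console console = System.console();
        if (console == null) {
            return null;
        }
        // readPassword disables echoing while the user types.
        return console.readPassword("%s", prompt);
    }

    // Overwrites the password in memory once it is no longer needed.
    static void wipe(char[] secret) {
        if (secret != null) {
            Arrays.fill(secret, '\0');
        }
    }
}
```

A caller would typically use `read`, check for null, authenticate, and then call `wipe` in a finally block.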

AppSec Malpractice No.8 – Not Paying Attention to Class Cloneability

The cloning mechanism available in Java can enable the hacker to create new instances of the classes you have defined, without executing any constructor. The safe approach is to make your objects non-cloneable. This can be achieved by overriding clone() in the class so that it always throws CloneNotSupportedException.
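A minimal sketch of a non-cloneable class, assuming a hypothetical `Account` class; only the `clone()` override matters here.

```java
// Hypothetical class; the fields are placeholders.
class Account {
    private final String id;

    Account(String id) {
        this.id = id;
    }

    public String getId() {
        return id;
    }

    // final: subclasses cannot re-enable cloning by overriding this method.
    @Override
    public final Object clone() throws CloneNotSupportedException {
        throw new CloneNotSupportedException("Account instances cannot be cloned");
    }
}
```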

If you do want to support cloning, you can still protect the clone() method against overriding by declaring it final. The code should look like the snippet below:

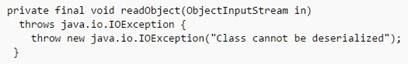

AppSec Malpractice No.9 – Overusing Serialization and Deserialization

Java has a mechanism that enables the serialization of objects (and the reverse, deserialization). This is usually used to save objects when the Java Virtual Machine (JVM) is “turned off”. But it’s an unsafe practice because adversaries can then access the internals of the objects and illegally harvest private/sensitive attributes. Declaring a writeObject method that throws an exception can be used to prevent serialization.

Developers should not serialize sensitive data, because then it’s out of the control of the Java Virtual Machine (JVM). If there is no workaround, the data should be encrypted.

The same thing applies to deserialization, which is used to construct objects from a stream of bytes. A malicious attacker can try to mimic a legitimate class and use this technique to instantiate an object’s state. A technique similar to writeObject is available to prevent deserialization: a readObject method that throws an exception.
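As a rough sketch of both techniques, the hypothetical `ApiCredential` class below is Serializable by declaration but refuses to be written out or read back. The class name, field, and exception messages are assumptions for illustration.

```java
import java.io.IOException;
import java.io.ObjectInputStream;
import java.io.ObjectOutputStream;
import java.io.Serializable;

// Hypothetical class that is Serializable by type but must never
// actually be serialized or deserialized.
final class ApiCredential implements Serializable {
    private static final long serialVersionUID = 1L;

    private final String secret;

    ApiCredential(String secret) {
        this.secret = secret;
    }

    String getSecret() {
        return secret;
    }

    // Invoked by ObjectOutputStream; throwing here blocks serialization.
    private void writeObject(ObjectOutputStream out) throws IOException {
        throw new IOException("ApiCredential cannot be serialized");
    }

    // Invoked by ObjectInputStream; throwing here blocks deserialization.
    private void readObject(ObjectInputStream in) throws IOException, ClassNotFoundException {
        throw new IOException("ApiCredential cannot be deserialized");
    }
}
```

Any attempt to pass an `ApiCredential` to `ObjectOutputStream.writeObject` then fails with the `IOException` instead of writing the secret to the stream.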

The Secure SDLC & Static Code Analysis (SCA)

Unfortunately, even the securest of development guidelines and rules are not enough to ensure flawless Java application code. Developers’ errors and the numerous changes made to the code during development eventually lead to vulnerabilities and loopholes in the application code. But organizations should be able to detect these as soon as possible.

There is a wide range of application security solutions available in the market today, each offering its own functionality and advantages. But the ideal solution should provide a comprehensive feature-set with the ability to be integrated into all stages of the Software Development Life Cycle (SDLC), if possible enabling automated testing for optimal results.

Static Code Analysis (SCA), belonging to the Static Application Security Testing (SAST) methodology, is one such solution. The benefits of this technique are many. They include:

- Creation of a secure SDLC (sSDLC) – With the scanning solution integrated into all stages of the development, application code can be scanned even before the build is achieved. Testing the source code enables the early mitigation of vulnerabilities and significantly speeds up the development process.

- Versatility – SCA can blend seamlessly into virtually any development environment and model. This can be the traditional Waterfall methodology or the Continuous Integration (CICD) model. This testing solution is also effective in Agile and DevOps environments, thanks to its fast scanning speeds and accurate results.

- Optimal mitigation performance – SCA has the unique functionality of showing developers where exactly the detected vulnerability is located within the code, allowing a quick and effective fix. This security solution also scans a wide range of programming and scripting languages with wide framework compatibility.

- Wide vulnerability coverage – SCA covers a wide range of issues – starting from application layer vulnerabilities all the way to code flaws. Even advanced analysis is available. For example, business logic flaws can be detected by scanning the source code. This gives organizations and developers a comprehensive security solution.

- Return on Investment (ROI) – To sum it up, early vulnerability detection significantly shortens remediation times and enables faster development. This helps avoid delays and minimizes the need for resource-hogging maintenance procedures. In other words, organizations can save a lot of time and money.

Java application security can be achieved when developers and the security brass work hand in hand and take the steps mentioned above. While developers can and should practice safe coding and produce code with high integrity, CISOs and security officers should implement the right solution to seal off the loopholes and vulnerabilities as early as possible.

Only a proactive approach from all sides involved can help organizations create secure applications.


Is this “using the latest version (Java 9)” a misprint?

Sorry to say, but it seems to me you do not know much of Java internals.

Making classes, methods, fields etc. private or final doesn’t protect you from “hackers” in any way. Just a few reflection tricks and even a static final field can be altered at runtime.

Check out the homepage of Heinz Kabutz (http://www.javaspecialists.eu/). He shows how to change private fields, even if they are static final. So your rules about private and final do not protect from being hacked.

I fail to see how any of the mistakes are related to security in any way. Security is about storing strings in encrypted store, safely parsing input from file/network and other similar things. How would me using less anonymous classes make my code more secure? Secure from what?

“When we are performing a code review on Java code, we should look at the following areas of concern. Getting developers to adopt leading practice techniques gives the inherent basic security features all code should have, “Self Defending Code”.” – OWASP

Dalibor, this article is loosely based on OWASP guidelines for secure Java coding. Above is a quote from their webpage. I recommend you don’t take these tips loosely. Of course this alone won’t stop hackers. Security tools like SCA can definitely help too.

Either way, thanks for reading and good luck.

There is something of this kind on https://www.owasp.org/index.php/Java_leading_security_practice I suppose it does apply when you host third party applications or plugins into your “container”. For instance, if you write an application server like Tomcat (used for Internet hosting service) and different third parties deploy their own applications, you MUST not permit to the hosted web apps to call some application server internal methods (i.e. application APP_A owned by Company_A, is not permitted to call a method asking Tomcat to undeploy APP_B owned by Company_B). Of course, we are not going to write an application server (besides, each company can use…

“Loosely based” is about the only accurate statement. Do you even understand how Java and web applications work?

One of the worst articles I have ever read…

This article promotes confusion about “security” of private members. If an attribute is private, code using the private member cannot be compiled. But if I am an attacker I can work around it and create a compiled class using a private attribute of a foreign class. It will also work after deploying, because access level is not checked at runtime, only at compile time.

More info on stack overflow:

http://stackoverflow.com/questions/9201603/are-private-members-really-more-secure-in-java

Even though it looks like hard-to-reach magic, every Java beginner who tries to create mods for Minecraft knows this trick:

http://www.minecraftforge.net/wiki/Using_Access_Transformers

Does this author know anything about Java? This is by far the worst article I ever read here. None of these points have anything to do with security problems or flaws – maybe bad architecture, but that’s a different topic…

If a hacker has access to your binaries and applications – this means the hacker is on your network and has access to your servers!! – you won’t have to worry about private classes or members in your java code…

Yeah . perhaps the subject should be changed.

good tutorial

You’re kidding, right?

This also only applies when your Java binaries are available to a hacker, such as with an Applet or Web Start App. Typically a hacker cannot get access to your Java classes in a web application.

Nice. I have made a mind map with these points. See http://blog.wenzlaff.de/?p=5597

This article makes me consider revoking from JCG the right to republish my weekly articles. They should at least apologize!

Thanks for the article…

Sorry to say @Java Code Geeks but this article isn’t worth to be republished here.

The author seems not to have the necessary knowledge about Java and just wants to write an advertisement for the (CsSAST).

Even if I understood that JCG may need advertisement (articles) I would expect that they are more in the sense and quality like the ones from Takipi.

I was very disappointed with this article. First the article incorrectly lists the latest current version of Java (as 9). Then, as mentioned by others, points 1-5 are moot because all those fields can be accessed by reflection. Then in item 5, there’s this: “Inner classes reduce the size and complexity of the Java application code, arguably making it less buggy and more efficient.” There are plenty of valid uses for inner classes, but reducing size and complexity is not one of them. The size of the code will be the same whether it’s in one file or another, and…

nice tutorial

http://www.javaworld.com/article/2076837/mobile-java/twelve-rules-for-developing-more-secure-java-code.html

Wow. Letting articles like this get published seriously erodes the credibility of this site. (And with the obtrusive pop-up ads, it’s already hard enough to like it)

The fuck, such hate in the comments. Kinda ungrateful for all these best practice tips.

To those saying you can use reflection anyway, yes you can, but you can only access anything if you didn’t instantiate a SecurityManager.

Shows how much the average joe dev knows about security O.o