Google Cloud Deploy – CD for a Java based project

This is a short write-up on using Google Cloud Deploy for Continuous Deployment of a Java-based project.

Google Cloud Deploy is a new entrant to the CD space. It currently supports continuous deployment to GKE-based targets, and support for other Google Cloud application runtimes is expected in the future.

Let’s start with why such a tool is needed when an automation tool like Cloud Build or Jenkins is already available. In my mind it comes down to two things:

- State – a dedicated CD tool can keep track of each artifact and the environments it has been deployed to. This makes it easy to promote a deployment, roll back to an older version, or roll forward. Such tracking can be built into a CI tool, but it involves a lot of coding effort.

- Integration with the deployment environment – a CD tool integrates well with the target deployment platform without needing much custom code.

Target Flow

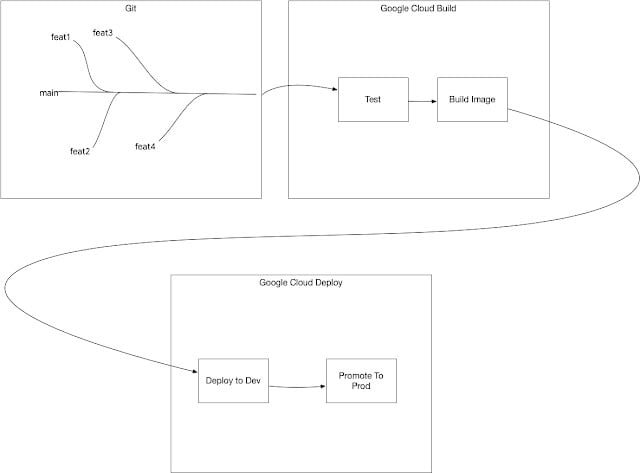

I am targeting the flow shown above. Any merge to the “main” branch of a repository should:

1. Test and build an image

2. Deploy the image to a “dev” GKE cluster

3. Allow the deployment to be promoted from the “dev” to the “prod” GKE cluster

Building an Image

Running the tests and building the image is handled by a combination of Cloud Build, which provides the build automation environment, and skaffold, which provides the image-building tooling through Cloud Native Buildpacks. It may be easier to look at the code repository to see how both are wired up – https://github.com/bijukunjummen/hello-skaffold-gke
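As a rough illustration of the skaffold side, a minimal skaffold.yaml using Cloud Native Buildpacks might look like the sketch below. This is not copied from the repository: the schema version, the image name, the builder image and the manifest path are all assumptions made for the example.

```yaml
# Hypothetical skaffold.yaml sketch; the values here are assumptions,
# see the linked repository for the actual configuration.
apiVersion: skaffold/v2beta16
kind: Config
metadata:
  name: hello-skaffold-gke
build:
  artifacts:
  # Image name is an assumption; it must match the image referenced in the manifests.
  - image: hello-skaffold-gke
    buildpacks:
      # Google Cloud's buildpacks builder, assumed here for a Java application.
      builder: gcr.io/buildpacks/builder:v1
deploy:
  kubectl:
    manifests:
    # Assumed location of the Kubernetes manifests in the repository.
    - kubernetes/*.yaml
```

With a file like this, skaffold knows both how to build the image and which manifests describe the deployment.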

Deploying the image to GKE

Now that an image has been baked, the next step is to deploy it into a GKE Kubernetes environment. Cloud Deploy has a declarative way of specifying the environments (referred to as Targets) and how a deployment is promoted through them. A Google Cloud Deploy pipeline looks like this:

    apiVersion: deploy.cloud.google.com/v1beta1
    kind: DeliveryPipeline
    metadata:
      name: hello-skaffold-gke
    description: Delivery Pipeline for a sample app
    serialPipeline:
      stages:
      - targetId: dev
      - targetId: prod
    ---
    apiVersion: deploy.cloud.google.com/v1beta1
    kind: Target
    metadata:
      name: dev
    description: A Dev Cluster
    gke:
      cluster: projects/a-project/locations/us-west1-a/clusters/cluster-dev
    ---
    apiVersion: deploy.cloud.google.com/v1beta1
    kind: Target
    metadata:
      name: prod
    description: A Prod Cluster
    requireApproval: true
    gke:
      cluster: projects/a-project/locations/us-west1-a/clusters/cluster-prod

The pipeline is fairly easy to read. The Targets describe the environments to deploy the image to, and the pipeline shows how the deployment progresses across those environments.

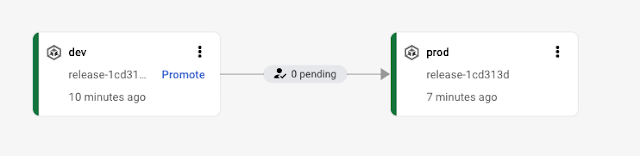

One thing to notice is that the “prod” target is marked with the “requireApproval” flag, which ensures that promotion to the prod environment happens only after an approval. The Cloud Deploy documentation covers all these concepts well. Also, Cloud Deploy depends strongly on skaffold to generate the Kubernetes manifests and deploy them to the relevant targets.
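To make the skaffold dependence a little more concrete, the manifests that skaffold renders are ordinary Kubernetes manifests whose image reference matches the artifact name in skaffold.yaml; skaffold substitutes the fully tagged image at render time. A minimal sketch, assuming an image named hello-skaffold-gke and an application listening on port 8080 (both assumptions, not taken from the repository):

```yaml
# Hypothetical Deployment manifest; names, replica count and port are assumptions.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: hello-skaffold-gke
spec:
  replicas: 1
  selector:
    matchLabels:
      app: hello-skaffold-gke
  template:
    metadata:
      labels:
        app: hello-skaffold-gke
    spec:
      containers:
      - name: hello-skaffold-gke
        # skaffold replaces this reference with the built, tagged image.
        image: hello-skaffold-gke
        ports:
        - containerPort: 8080
```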

Given such a deployment pipeline, it can be put in place using:

    gcloud beta deploy apply --file=clouddeploy.yaml --region=us-west1

Now that the CD pipeline is in place, a “Release” can be triggered once testing has completed on the “main” branch. A command like the following is integrated into the Cloud Build pipeline to do this, with a file pointing to the build artifacts:

    gcloud beta deploy releases create release-01df029 --delivery-pipeline hello-skaffold-gke --region us-west1 --build-artifacts artifacts.json

This deploys the generated Kubernetes manifests, pointing to the right build artifacts, to the “dev” environment. The release can then be promoted to additional environments, prod in this instance.
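The test, build and release steps described above could be wired into Cloud Build roughly as in the following sketch. It is an illustration rather than the repository's actual cloudbuild.yaml: the Gradle test step, the openjdk:11 image, the gcr.io/$PROJECT_ID image repository and the release naming scheme based on the commit's short SHA are all assumptions.

```yaml
# Hypothetical cloudbuild.yaml sketch; step details are assumptions.
steps:
# 1. Run the tests (assumes the project uses a Gradle wrapper).
- name: openjdk:11
  entrypoint: bash
  args: ['-c', './gradlew check']

# 2. Build and push the image with skaffold, recording the built
#    image references in artifacts.json.
- name: gcr.io/k8s-skaffold/skaffold
  entrypoint: skaffold
  args:
  - build
  - --interactive=false
  - --default-repo=gcr.io/$PROJECT_ID
  - --file-output=/workspace/artifacts.json

# 3. Create a Cloud Deploy release from the build artifacts.
#    $SHORT_SHA is only populated for builds started by a trigger.
- name: gcr.io/google.com/cloudsdktool/cloud-sdk
  entrypoint: gcloud
  args:
  - beta
  - deploy
  - releases
  - create
  - release-$SHORT_SHA
  - --delivery-pipeline=hello-skaffold-gke
  - --region=us-west1
  - --build-artifacts=/workspace/artifacts.json
```

Keeping artifacts.json under /workspace lets the release step read the file written by the skaffold step, since Cloud Build shares that directory across steps.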

Conclusion

This is a whirlwind tour of Google Cloud Deploy and the features that it offers. It is still early days and I am excited to see where the product goes. The learning curve is fairly steep; a developer is expected to understand:

- Kubernetes, which is the only application runtime currently supported. Expect other runtimes to be supported as the product evolves.

- skaffold, which is used for building, tagging, and generating Kubernetes artifacts

- Cloud Build and its YAML configuration

- Google Cloud Deploy’s YAML configuration

It will get simpler as the product matures.

Published on Java Code Geeks with permission by Biju Kunjummen, partner at our JCG program. See the original article here: Google Cloud Deploy – CD for a Java based project. Opinions expressed by Java Code Geeks contributors are their own.