Performance vs Reliability: Why Java Apps are Like F1 Cars

Think application performance and reliability are the same? Think again.

Are performance and reliability related? Or are they two separate concerns? I think the latter. Today, the reality is that IT sees application performance and reliability as the same thing, but that couldn’t be further from the truth.

Let’s look at how Formula 1 teams manage performance and reliability.

Last season, McLaren Honda were both slow and unreliable. This season, Ferrari have been quick in qualifying but unreliable in the race. Mercedes, on the other hand, have been super quick and super reliable for the past two years, kicking everyone’s ass.

Performance

An F1 car’s performance is typically influenced by three things: power unit, engine mapping, and aerodynamic drag/downforce.

An engine map dictates how much of the available resources (air, fuel and electricity) a power unit consumes. Aerodynamic drag/downforce is dictated by how airflow is managed around the car.

More power and lower drag mean less resistance, faster acceleration and a higher top speed.

More downforce means more grip and speed in the corners. Performance is all about how fast an F1 car laps a circuit. Over a typical weekend, F1 teams will make hundreds of changes to the car’s setup, hoping to unlock every tenth of a second so they can out-qualify and out-race their competitors.

Similarly, application performance is influenced by three things: JVM runtime, application logic and transaction flow.

Application logic consumes resources from the JVM runtime (threads, CPU, memory, …) and transaction flow is dictated by how many hops each transaction must make across infrastructure components or third-party web services.

Performance is about timing end-user requests (pages/transactions) and understanding the end-to-end latency across the application logic and transaction flow. Developers, like F1 engineers, will make hundreds of changes, hoping to optimize the end-user experience so the business benefits.

The primary unit of measurement for performance is response time, and as such, Application Performance Monitoring (APM) solutions like AppDynamics, New Relic and Dynatrace are top banana when it comes to managing this.
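To make "response time" concrete, here is a minimal sketch of timing a single request in plain Java. It is not how any of the APM products above work internally; `ResponseTimer`, `Timed` and the lambda standing in for a request handler are hypothetical names used only for illustration.

```java
import java.util.function.Supplier;

/** Hypothetical helper that measures how long one request handler takes. */
final class ResponseTimer {

    /** The handler's return value together with the elapsed time. */
    static final class Timed<T> {
        final T value;
        final long elapsedNanos;

        Timed(T value, long elapsedNanos) {
            this.value = value;
            this.elapsedNanos = elapsedNanos;
        }

        double elapsedMillis() {
            return elapsedNanos / 1_000_000.0;
        }
    }

    /** Runs the handler once and records its wall-clock duration. */
    static <T> Timed<T> time(Supplier<T> handler) {
        long start = System.nanoTime(); // monotonic clock, suited to measuring intervals
        T value = handler.get();
        return new Timed<>(value, System.nanoTime() - start);
    }

    public static void main(String[] args) {
        // Stand-in for a real request such as a flight search.
        Timed<String> result = time(() -> "flight search results");
        System.out.printf("response=%s time=%.3f ms%n", result.value, result.elapsedMillis());
    }
}
```

A real APM agent would also follow the transaction across every hop; this sketch only times one call inside one JVM.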

Reliability

An F1 car’s reliability is typically influenced by the quality of its engineered components, the car’s ECU and the million-odd sensor inputs, parameters and functions.

A few unexpected parameters and the car will instantly stop. It happened to Nico Rosberg twice last year when his steering wheel and electronics froze on the grid, despite his having the fastest car by some margin.

Troubleshooting the performance of an F1 car is very different from troubleshooting its reliability. They are different use cases that require different telemetry, tools and perspectives. Reliability is about understanding why things break, not why things run slow.

It’s the same deal with applications, only when an application craps out, it’s because application logic has failed somewhere, causing an error or exception to be thrown. This is very different to application logic running slow.

Application logic takes input, processes it and creates some kind of output. Like F1 cars, applications have thousands of components (functions) with millions of lines of code that each process a few hundred thousand parameters (objects and variables) at any one time. Performance is irrelevant without reliability. Log files are where errors and exceptions live.

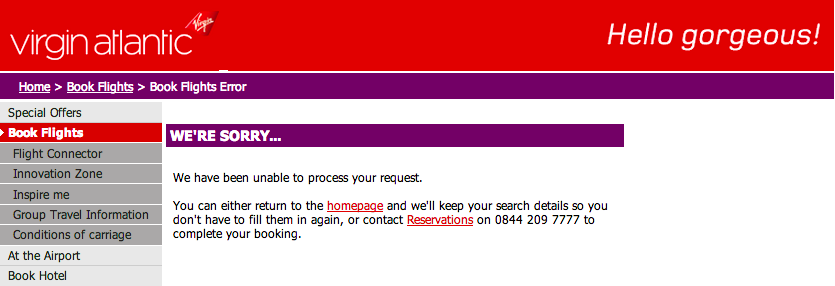

Question: Is a slow flight search more or less important than a flight booking error?

Answer: they both kill the business so you need to manage both.

Welcome to the World of Crap Data

Let’s assume those APM solutions do a mighty fine job managing performance. Our industry is still convinced that log files (or big data, as some vendors call it) are the answer to understanding why applications fail. I would actually call this approach more like ‘crap data’.

Log files lack depth, context and insight to anyone who really wants to find the real root cause of an application failure. Sure, log files are better than nothing but let’s look at what data a developer needs in order to consistently find the root cause:

- Application Stack Trace – showing which application components (classes/methods) were part of a failure

- Application Source Code – showing the line of code that caused the failure

- Application State – showing the application parameters (objects, variables and values) that were processed by the component/source code
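The three items above can be sketched in a few lines of Java. This is only an illustration of the kind of data involved, not how any particular tool collects it: `FailureSnapshot`, `capture` and `seatsPerGroup` are hypothetical names, the line number from the top stack frame stands in for the source code, and the state has to be passed in by hand, which is exactly the part that is hard to do in advance.

```java
import java.util.Collections;
import java.util.LinkedHashMap;
import java.util.Map;

/** Hypothetical record of one failure: where it happened and what data was in play. */
final class FailureSnapshot {
    final String exceptionType;
    final String className;
    final String methodName;
    final int lineNumber;              // negative when line info is unavailable
    final Map<String, Object> state;

    private FailureSnapshot(Throwable error, Map<String, Object> state) {
        StackTraceElement[] frames = error.getStackTrace();
        this.exceptionType = error.getClass().getName();
        // The first frame is the method that was executing when the error was thrown.
        this.className = frames.length > 0 ? frames[0].getClassName() : "unknown";
        this.methodName = frames.length > 0 ? frames[0].getMethodName() : "unknown";
        this.lineNumber = frames.length > 0 ? frames[0].getLineNumber() : -1;
        // Copy the map so later changes to it do not alter the snapshot.
        this.state = Collections.unmodifiableMap(new LinkedHashMap<>(state));
    }

    static FailureSnapshot capture(Throwable error, Map<String, Object> state) {
        return new FailureSnapshot(error, state);
    }

    /** Hypothetical business logic that fails when groups is zero. */
    static int seatsPerGroup(int seats, int groups) {
        return seats / groups;
    }

    @Override
    public String toString() {
        return exceptionType + " at " + className + "." + methodName
                + ":" + lineNumber + " state=" + state;
    }

    public static void main(String[] args) {
        Map<String, Object> state = new LinkedHashMap<>();
        state.put("seats", 180);
        state.put("groups", 0);
        try {
            seatsPerGroup(180, 0);
        } catch (ArithmeticException e) {
            System.out.println(capture(e, state));
        }
    }
}
```

The catch is that the developer had to know beforehand that `seats` and `groups` were the values worth recording.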

Most log files today contain millions of duplicated application stack traces. This is why Splunk is worth six billion dollars: every duplicated stack trace costs $$$ to parse, index, store and search.
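To illustrate how much of that volume is repetition, here is a rough sketch that fingerprints a stack trace and counts repeats instead of writing each one out again. `StackTraceDeduper` and its methods are hypothetical, and the fingerprint rule (exception type plus each frame's class, method and line, ignoring the message) is an assumption made for the example.

```java
import java.util.LinkedHashMap;
import java.util.Map;

/** Hypothetical counter that treats identical stack traces as one entry. */
final class StackTraceDeduper {
    private final Map<String, Integer> counts = new LinkedHashMap<>();

    /**
     * Builds a fingerprint from the exception type and every frame.
     * The message is left out because it often contains per-request values.
     */
    static String fingerprint(Throwable error) {
        StringBuilder sb = new StringBuilder(error.getClass().getName());
        for (StackTraceElement frame : error.getStackTrace()) {
            sb.append('|')
              .append(frame.getClassName()).append('.')
              .append(frame.getMethodName()).append(':')
              .append(frame.getLineNumber());
        }
        return sb.toString();
    }

    /** Records the error and returns true only the first time this trace is seen. */
    boolean record(Throwable error) {
        return counts.merge(fingerprint(error), 1, Integer::sum) == 1;
    }

    /** Number of different traces recorded so far. */
    int distinctTraces() {
        return counts.size();
    }

    /** How many times this exact trace has been recorded. */
    int occurrences(Throwable error) {
        return counts.getOrDefault(fingerprint(error), 0);
    }
}
```

A caller would write the full trace to the log only when `record` returns true and otherwise just bump the count. That trims the storage bill, but it still says nothing about the source code or state behind the failure.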

Yes, developers can customize application logs to put whatever data they want in them. The bad news is that developers can’t log everything due to overhead, and creating meaningful logs often requires knowing what will break in the application.

Without a crystal ball it’s impossible to create rich, effective log files, which is why teams still spend hours or days looking for that needle in the haystack. No application source code or state means operations and development must guess. This is bad. Unfortunately, a stack trace isn’t enough. In F1 this would be like the Mercedes pit crew telling their engineers: “Our telemetry just confirmed that Nico’s steering wheel is broken. That’s the only telemetry we’ve got. Can you figure out why and fix it ASAP, please?”

Can you imagine what the engineers might think? Unfortunately, this is what most developers think today when they’re informed that something has failed in the application.

The good news is that it’s now possible to know WHEN and WHY application code breaks in production. Welcome to Takipi.

What shouldn’t be possible is now possible, and it’s the end for log files.

| Reference: | Performance vs Reliability: Why Java Apps are Like F1 Cars from our JCG partner Steve Burton at the Takipi blog. |