HotSpot GC Thread CPU footprint on Linux

The following question will test your knowledge on garbage collection and high CPU troubleshooting for Java applications running on Linux OS. This troubleshooting technique is especially crucial when investigating excessive GC and / or CPU utilization. It will assume that you do not have access to advanced monitoring tools such as Compuware dynaTrace or even JVisualVM. Future tutorials using such tools will be presented in the future but please ensure that you first master the base troubleshooting principles.

Question:

How can you monitor and calculate how much CPU % each of the Oracle HotSpot or JRockit JVM garbage collection (GC) threads is using at runtime on Linux OS?

Answer:

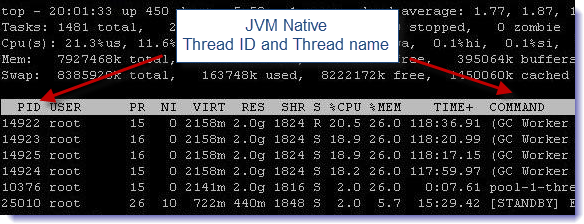

On the Linux OS, Java threads are implemented as native Threads, which results in each thread being a separate Linux process. This means that you are able to monitor the CPU % of any Java thread created by the HotSpot JVM using the top –H command (Threads toggle view).

That said, depending of the GC policy that you are using and your server specifications, the HotSpot & JRockit JVM will create a certain number of GC threads that will be performing young and old space collections. Such threads can be easily identified by generating a JVM thread dump. As you can see below in our example, the Oracle JRockit JVM did create 4 GC threads identified as “(GC Worker Thread X)”.

===== FULL THREAD DUMP =============== Fri Nov 16 19:58:36 2012 BEA JRockit(R) R27.5.0-110-94909-1.5.0_14-20080204-1558-linux-ia32 "Main Thread" id=1 idx=0x4 tid=14911 prio=5 alive, in native, waiting -- Waiting for notification on: weblogic/t3/srvr/T3Srvr@0xfd0a4b0[fat lock] at jrockit/vm/Threads.waitForNotifySignal(JLjava/lang/Object;)Z(Native Method) at java/lang/Object.wait(J)V(Native Method) at java/lang/Object.wait(Object.java:474) at weblogic/t3/srvr/T3Srvr.waitForDeath(T3Srvr.java:730) ^-- Lock released while waiting: weblogic/t3/srvr/T3Srvr@0xfd0a4b0[fat lock] at weblogic/t3/srvr/T3Srvr.run(T3Srvr.java:380) at weblogic/Server.main(Server.java:67) at jrockit/vm/RNI.c2java(IIIII)V(Native Method) -- end of trace "(Signal Handler)" id=2 idx=0x8 tid=14920 prio=5 alive, in native, daemon "(GC Main Thread)" id=3 idx=0xc tid=14921 prio=5 alive, in native, native_waiting, daemon "(GC Worker Thread 1)" id=? idx=0x10 tid=14922 prio=5 alive, in native, daemon "(GC Worker Thread 2)" id=? idx=0x14 tid=14923 prio=5 alive, in native, daemon "(GC Worker Thread 3)" id=? idx=0x18 tid=14924 prio=5 alive, in native, daemon "(GC Worker Thread 4)" id=? idx=0x1c tid=14925 prio=5 alive, in native, daemon ………………………

Now let’s put all of these principles together via a simple example.

Step #1 – Monitor the GC thread CPU utilization

The first step of the investigation is to monitor and determine:

- Identify the native Thread ID for each GC worker thread shown via the Linux top –H command.

- Identify the CPU % for each GC worker thread.

Step #2 – Generate and analyze JVM Thread Dumps

At the same time of Linux top –H, generate 2 or 3 JVM Thread Dump snapshots via kill -3 <Java PID>.

- Open the JVM Thread Dump and locate the JVM GC worker threads.

- Now correlate the “top -H” output data with the JVM Thread Dump data by looking at the native thread id (tid attribute).

As you can see in our example, such analysis did allow us to determine that all our GC worker threads were using around 20% CPU each. This was due to major collections happening at that time. Please note that it is also very useful to enable verbose:gc as it will allow you to correlate such CPU spikes with minor and major collections and determine how much your JVM GC process is contributing to the overall server CPU utilization.